This chapter introduces and critically reflects upon some key challenges and open issues in Human-Robot Interaction (HRI) research. The chapter emphasizes that in order to tackle these challenges, both the user-centred and the robotics-centred aspects of HRI need to be addressed. The synthetic nature of HRI is highlighted and discussed in the context of methodological issues. Different experimental paradigms in HRI are described and compared. Furthermore, I will argue that due to the artificiality of robots, we need to be careful in making assumptions about the 'naturalness' of HRI and question the widespread assumption that humanoid robots should be the ultimate goal in designing successful HRI. In addition to building robots for the purpose of providing services for and on behalf of people, a different direction in HRI is introduced, namely to use robots as social mediators between people. Examples of HRI research illustrate these ideas.

36.1 Background

Human-Robot Interaction (HRI) is a relatively young discipline that has attracted a lot of attention over the past few years due to the increasing availability of complex robots and people's exposure to such robots in their daily lives, e.g. as robotic toys or, to some extent, as household appliances (robotic vacuum cleaners or lawn mowers). Also, robots are increasingly being developed for real-world application areas, such as rehabilitation, eldercare, robot-assisted therapy and other assistive or educational applications.

This article is not meant to be a review of HRI per se; for such surveys and discussions of the history and origins of this field, please consult e.g. Goodrich and Schultz (2007) and Dautenhahn (2007a). Instead, I would like to discuss a few key issues within the domain of HRI that often lead to misunderstandings or misinterpretations of research in this domain. The chapter will not delve into technical details but will focus on interdisciplinary aspects of this research domain in order to inspire innovative new research that goes beyond the traditional boundaries of established disciplines.

Researchers may be motivated differently to join the field of HRI. Some may be roboticists, working on developing advanced robotic systems with possible real-world applications, e.g. service robots that should assist people in their homes or at work, and they may join this field in order to find out how to handle situations when these robots need to interact with people, in order to increase the robots' efficiency. Others may be psychologists or ethologists and take a human-centred perspective on HRI; they may use robots as tools in order to understand fundamental issues of how humans interact socially and communicate with others and with interactive artifacts. Artificial Intelligence and Cognitive Science researchers may join this field with the motivation to understand and develop complex intelligent systems, using robots as embodied instantiations and testbeds of those.

Last but not least, a number of people are interested in studying the interaction of people and robots: how people perceive different types and behaviours of robots, how they perceive social cues or different robot embodiments, etc. This work is usually carried out via 'user studies'. Such work often has little technical content; e.g. it may use commercially available and already fully programmed robots, or research prototypes showing few behaviours or being controlled remotely (via the Wizard-of-Oz approach, whereby a human operator, unknown to the participants, controls the robot), in order to create very constrained and controlled experimental conditions. Such research strongly focuses on humans' reactions and attitudes towards robots. Research in this area typically entails large-scale evaluations trying to find statistically significant results. Unfortunately this area of 'user studies', which is methodologically heavily influenced by experimental psychology and human-computer interaction (HCI) research, is often narrowly equated with the field of "HRI". "Shall we focus on the AI and technical development of the robot or shall we do HRI?" is not an uncommon remark heard in research discussions. This tendency to equate HRI with 'user studies' is in my view very unfortunate, and it may in the long run sideline HRI and transform this field into a niche domain. HRI as a research domain is a synthetic science, and it should tackle the whole range of challenges, from technical and cognitive/AI to psychological, social, cognitive and behavioural.

36.2 HRI - a synthetic, not a natural science

HRI is a field that emerged during the early 1990s and has been characterized as:

"Human—Robot Interaction (HRI) is a field of study dedicated to understanding, designing, and evaluating robotic systems for use by or with humans "

(Goodrich and Schultz, 2007, p. 204).

What is Human-Robot Interaction (HRI) and what does it try to achieve?

"The HRI problem is to understand and shape the interactions between one or more humans and one or more robots"

(Goodrich and Schultz, 2007, p. 216).

The characterization of the fundamental HRI problem given above focuses on the issues of understanding what happens between robots and people, and how these interactions can be shaped, i.e. influenced, improved towards a certain goal etc.

The above view implicitly assumes a reference point of what is meant by "robot". The term is often traced back to the Czech word robota (work), and its first usage is attributed to Karel Čapek's play R.U.R.: Rossum's Universal Robots (1920). However, the term "robot" is far from clearly defined. Many technical definitions are available concerning its motor, sensory and cognitive functionalities, but little is specified about the robot's appearance, behaviour and interaction with people. As it happens, if a non-researcher interacts with a robot that he or she has never encountered before, then what matters is how the robot looks, what it does, and how it interacts and communicates with the person. The 'user' in such a context will not care much about the cognitive architecture that has been implemented, or the programming language that has been used, or the details of the mechanical design.

Behaviours and appearances of robots have changed dramatically since the early 1990s, and they continue to change, with new robots appearing on the market and other robots becoming obsolete. The design range of robot appearances is huge, ranging from mechanoid (mechanical-looking) to zoomorphic (animal-looking) to humanoid (human-like) machines, as well as android robots at the extreme end of human-likeness. Similarly large is the design space of robot behaviour and cognitive abilities. Most robots are unique designs; their hardware and often software may be incompatible with other robots or even previous versions of the same robot. Thus, robots are generally discrete, isolated systems: they have not evolved in the same way as natural species have evolved, and they have not adapted during evolution to their environments. When biological species evolve, new generations are connected to the previous generations in non-trivial ways; in fact, one needs to know the evolutionary history of a species in order to fully appreciate its morphology, biology, behaviour and other features. Robots are designed by people, and are programmed by people. Even robots that learn have been programmed how and when to learn. Evolutionary approaches to robots' embodiment and control (Nolfi and Floreano, 2000; Harvey et al., 2005) and developmental approaches to the development of a robot's social and cognitive abilities (Lungarella et al., 2003; Asada et al., 2009; Cangelosi et al., 2010; Vernon et al., 2011; Nehaniv et al., 2013) may one day create a different situation, but at present, robots used in HRI are human-designed systems. This is very different from ethology, experimental psychology etc., which study biological systems. To give an example, in 1948 Edward C. Tolman wrote his famous article "Cognitive Maps in Rats and Men". Still today his work is among the key cited articles in research on navigation and cognitive maps in humans and other animals.
Rats and people are still the same two species; they have not transformed into completely different organisms since 1948, so results gained in 1948 can still be compared with results obtained today. In contrast, the robots that were available in the early 1990s and today's robots do not share a common evolutionary history; they are just very different robotic 'species'.

Thus, what we mean by 'robot' today will be very different from what we mean by 'robot' in a hundred years' time. The concept of robot is a moving target; we constantly reinvent what we consider to be a 'robot'. Studying interactions with robots and gaining general insights into HRI applicable across different platforms is therefore a big challenge. Focusing only on the 'H' in HRI, i.e. 'user studies' and the human perspective, misses the important 'R', the robot component, the technological and robotics characteristics of the robot. Only a deep investigation of both aspects will eventually illuminate the elusive 'I', the interaction that emerges when we put people and interactive robots in a shared context. In my perspective, the key challenge and characterization of HRI can be phrased as follows:

"HRI is the science of studying people's behaviour and attitudes towards robots in relationship to the physical, technological and interactive features of the robots, with the goal to develop robots that facilitate the emergence of human-robot interactions that are at the same time efficient (according to the original requirements of their envisaged area of use), but are also acceptable to people, and meet the social and emotional needs of their individual users as well as respecting human values".

36.3 HRI - methodological issues

As discussed in the previous section, the concept of 'robot' is a moving target. Thus, unlike the biological sciences, HRI research suffers from not being able to compare results directly across studies that use different types of robots. Ideally, one would like to carry out every HRI experiment with a multitude of robots and corresponding behaviours, which is practically impossible.

Let us consider a thought experiment and assume our research question is to investigate how a cylindrically shaped mobile robot should approach a seated person and how the robot's behaviour and appearance influence people's reactions. The robot will be programmed to carry a bottle of water, approach the person from a certain distance, stop at a certain distance in the vicinity of the person, orient its front (or head) towards the person and say "Would you like a drink?". Video cameras record people's reactions to the robot, and after the experiment they complete a questionnaire on their views and experiences of the experiment. Note, there is in fact no bi-directional interaction involved; the person is mainly passive. The scenario has been simplified this way to be able to test different conditions. We only consider three values for each category, i.e. no continuous values. Despite these gross simplifications, as indicated in Table 36.1 below, the seven features with three values each yield 3^7 = 2187 combinations, i.e. possible experimental conditions to which we may expose participants. For each condition we need a number, X, of participants in order to satisfy statistical constraints. Each session, if kept to a very minimal scenario, will take at least 15 minutes, plus another 15 minutes for the introduction, debriefing, questionnaires/interviews, as well as signing of consent forms etc. Note, more meaningful HRI scenarios, e.g. those we conduct in our Robot House described below, typically involve scheduling one full hour for each participant per session. Since people's opinions of and behaviours towards robots are likely to change in long-term interactions, each person should be exposed to the same condition 5 times, which gives 2187 * 5 = 10935 different sessions.
Also, the participants need to be chosen carefully; ideally one would also consider possible age and gender differences, as well as personality characteristics and other individual differences, which means repeating the experiment with different groups of participants. Regardless of whether we expose one participant to all conditions or choose different participants for each condition, getting sufficient data for meaningful statistical analysis will clearly be impractical. We end up with about 328050 * X minutes required for the experiment (10935 sessions of 30 minutes each, for X participants per condition), not considering situations where the experiment has to be interrupted due to system failure, rescheduling of appointments for participants etc. Clearly, running such an experiment is impractical and not desirable: given that only minimal conditions are being addressed, results from this experiment would necessarily be very limited and the effort certainly not worthwhile.

| Feature | Option 1 | Option 2 | Option 3 |
|---|---|---|---|
| Height | 2 m | 1 m | 50 cm |
| Speed | Fast | Medium | Slow |
| Voice | Human-like | Robot-like | None |
| Colour of body | Red | Blue | White |
| Approach distance to person | Close | Medium | Far |
| Approach direction to person | Frontal approach | Side approach | Side-back approach |
| Head | Head with human-like features | Mechanical head | No head |
| ... | | | |

Table 36.1: HRI thought experiment.
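
The arithmetic of the thought experiment can be checked with a short sketch. It is only an illustration: the dictionary keys and function names are assumptions introduced here, the feature levels are copied from Table 36.1, and the session length and number of repetitions are the figures given in the text above.

```python
from itertools import product

# Feature levels copied from Table 36.1 (three levels per feature).
# The dictionary keys are illustrative names, not terms from the chapter.
FEATURES = {
    "height": ["2m", "1m", "50cm"],
    "speed": ["fast", "medium", "slow"],
    "voice": ["human-like", "robot-like", "none"],
    "colour": ["red", "blue", "white"],
    "approach_distance": ["close", "medium", "far"],
    "approach_direction": ["frontal", "side", "side-back"],
    "head": ["human-like", "mechanical", "none"],
}

REPEATS_PER_CONDITION = 5       # exposures per condition, as stated in the text
MINUTES_PER_SESSION = 15 + 15   # minimal scenario plus introduction/debriefing


def count_conditions(features):
    """Count the cells of the full factorial design by enumerating them."""
    return sum(1 for _ in product(*features.values()))


def total_minutes(features, participants_per_condition):
    """Total session time in minutes for X participants per condition."""
    sessions = count_conditions(features) * REPEATS_PER_CONDITION
    return sessions * MINUTES_PER_SESSION * participants_per_condition


conditions = count_conditions(FEATURES)
sessions = conditions * REPEATS_PER_CONDITION
print(conditions, sessions, total_minutes(FEATURES, 1))
# prints: 2187 10935 328050
```

Even with a hypothetical X of only 10 participants per condition, `total_minutes(FEATURES, 10)` comes to 3280500 minutes, which is more than 54000 hours of sessions.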

Given these constraints, a typical HRI experiment simplifies to an even greater extent. The above study could limit itself to a short and a tall robot and two different approach distances, resulting in 4 experimental conditions. The results would indicate how robot height influences people's preferred approach distances but only in a very limited sense, since all other features would have to be held constant, i.e. the robot's appearance (apart from height), speed, voice, colour, approach direction, head feature, etc. would be chosen once and then kept constant for the whole experiment. Thus, any results from our hypothetical experiment would not allow us to extrapolate easily to other robot designs and behaviour, or other user groups. Robots are designed artifacts, and they are a moving target; what we consider to be a typical 'robot' today will probably be very different from what people in 200 years consider to be a 'robot'. So will the results we have gained over the past 15 or 20 years still be applicable to tomorrow's robots?

As I have pointed out previously (Dautenhahn, 2007b), HRI is often compared to other experimental sciences, such as ethology and in particular experimental or even clinical psychology. And indeed, quantitative methods used in these domains often provide valuable guidelines and sets of established research methods that are used to design and evaluate HRI experiments, typically focusing on quantitative, statistical methods requiring large-scale experiments, i.e. involving large sample sizes of participants, and typically one or more control conditions. Due to the nature of this work, the studies are typically short-term, exposing participants to a particular condition only once or a few times. Textbooks on research methods in experimental psychology can provide guidelines for newcomers to the field. However, there is an inherent danger if such approaches are taken as the gold standard for HRI research, i.e. if any HRI study is measured against it. This is very unfortunate since, in fact, many other methodological approaches exist that provide different but equally valuable insights into human-robot interaction. Such qualitative methods may include in-depth, long-term case studies where individual participants are exposed to robots over an extensive period of time. The purpose of such studies is more focused on the actual meaning of the interaction, the experience of the participants, any behavioural changes that may occur and changes in participants' attitudes towards the robots or the interaction. Such approaches often lack control conditions but analyse in great detail interactions over a longer period of time. Other approaches, e.g. conversation-analytic methods (Dickerson et al., 2013; Rossano et al., 2013), may analyse in depth the detailed nature of the interactions and how interaction partners respond and attend to each other and coordinate their actions.

In the field of assistive technology and rehabilitation robotics, where researchers develop robotic systems for specific user groups, control conditions with different user groups are usually not required: if one develops systems to assist or rehabilitate people with motor impairments after a stroke, designs aids to help visually impaired people, or develops robotic technology to help children with autism learn about social behaviour and communication, contrasting their use of a robotic system with how healthy/neurotypical people may use the same system does not make much sense. We already know about the specific impairments of our user groups, and the purpose of such work is not to highlight again how they differ from healthy/neurotypical people. Also, often the diversity of responses within the target user group is of interest. Thus, in this domain, control groups only make sense if those systems are meant to be used for different target user groups, and so comparative studies can highlight how each of them would use and could benefit (or not) from such a system. However, most assistive, rehabilitative systems are especially designed for people with special needs, in which case control conditions with different user groups are not necessarily useful.

Note, an important part of control conditions in assistive technology is to test different systems or different versions of the same system in different experimental conditions. Such comparisons are important since they a) allow gaining data to further improve the system, and b) can highlight the added value of an assistive system compared to other conventional systems or approaches. For example, Werry and Dautenhahn (2007) showed that an interactive, mobile robot engages children with autism better than a non-robotic conventional toy.

A physician or physiotherapist may use robotic technology in order to find out about the nature of a particular medical condition or impairment, e.g. the nature of motor impairment after stroke, and may use an assessment robot to be tested with both healthy people and stroke patients. Similarly, a psychologist may study the nature of autism by using robotic artifacts, comparing e.g. how children respond to social cues, speech or tactile interaction. Such artifacts would be tools in the research on the nature of the disorder or disability, rather than assistive tools built to help the patients; an assistive tool would also have to take into consideration the patient's individual differences, likes and dislikes and preferences in the context of using the tool.

Developing complex robots for human-robot interaction requires a substantial amount of resources in terms of researchers, equipment, know-how and funding, and it is not uncommon that the development of such a robot may take years until it is fully functioning. Examples of this are the robot 'butler' Care-O-bot® 3 (Parlitz et al., 2008; Reiser et al., 2013, cf. Fig. 1), whose first prototype was developed as part of the EU FP6 project COGNIRON (2004-2008), or the iCub robot (Metta et al., 2010, Fig. 2) developed from 2004-2008 as part of the 5.5-year FP6 project Robotcub. Both robots are still under development and upgraded regularly. The iCub was developed as a research platform for developmental and cognitive robotics by a large consortium, including several European partners developing the hardware and software of the robot. Another example is the IROMEC platform that was developed from 2006-2009 as part of the FP6 project IROMEC, Fig. 3. The robot has been developed as a social mediator for children with special needs who can learn through play. Results of the IROMEC project do not only include the robotic platform, but also a framework for developing scenarios for robot-assisted play (Robins et al., 2010), and a set of 12 detailed play scenarios that the Robot-Assisted Therapy (RAT) community can use according to specific developmental and educational objectives for each child (Robins et al., 2012). In the IROMEC project a dedicated user-centred design approach was taken (Marti and Bannon, 2009; Robins et al., 2010); however, time ran out at the end of the project to do a second design cycle in order to modify the platform based on trials with the targeted end-users. Such modifications would have been highly desirable, since interactions between users and new technology typically illuminate issues that have not been considered initially.
In the case of the iCub, the robot was developed initially as a new cognitive systems research robotics platform, so no concrete end users were envisaged. In the case of the Care-O-bot® 3, professional designers were involved in order to derive a 'friendly' design (Parlitz et al., 2008).

Author/Copyright holder: Courtesy of Kerstin Dautenhahn. Copyright terms and licence: CC-Att-SA (Creative Commons Attribution-ShareAlike 3.0 Unported).

Figure 36.1 A-B: The Care-O-bot® 3 robot in the UH Robot House, investigating robot assistance for elderly users as part of the ACCOMPANY project (2011, ongoing). See a video (http://www.youtube.com/watch?v=qp47BPw__9M). The Robot House is based off-campus in a residential area, and is a more naturalistic environment for the study of home assistance robots than laboratory settings, cf. Figure 6. Bringing HRI into natural environments poses many challenges but also opportunities (e.g. Sabanovic et al. 2006; Kanda et al., 2007; Huttenrauch et al. 2009; Kidd and Breazeal, 2008; Kanda et al. 2010; Dautenhahn, 2007; Woods et al., 2007; Walters et al., 2008).

Figure 36.2: The iCub (2013) humanoid open source platform, developed as part of the Robotcub project (2013).

Figure 36.3: The IROMEC robot which was developed as part of the IROMEC project (2013).

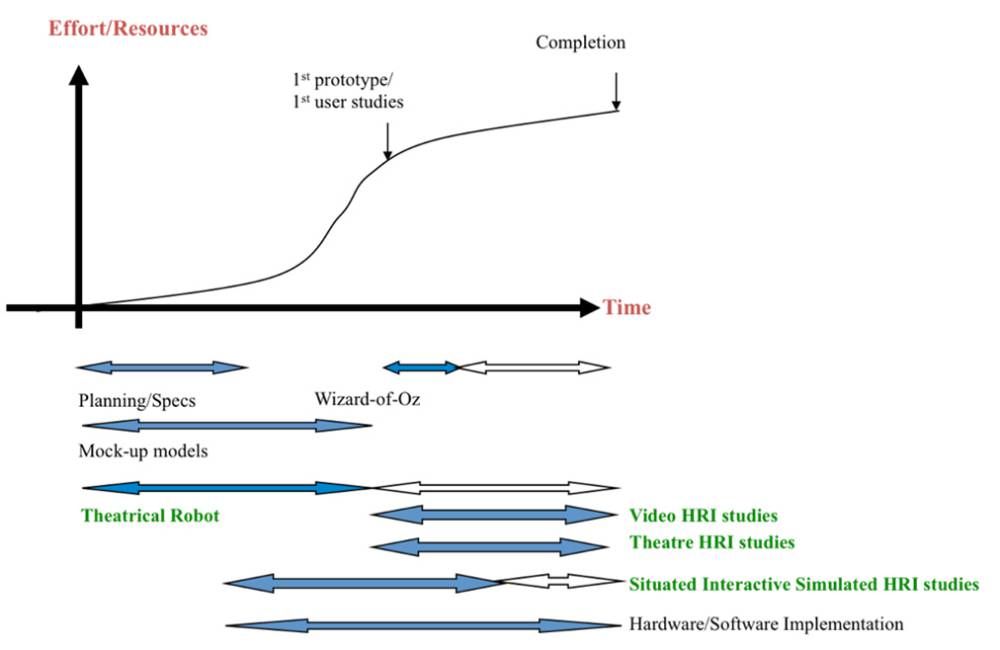

Thus, designing robots for HRI 'properly', i.e. involving users in the design and ensuring that the robot to be developed fulfills its targeted roles and functions and provides a positive user experience, remains a difficult task (Marti and Bannon, 2009). A number of methods are thus used to gain input and feedback from users before the completion of a fully functioning robot prototype, see Fig. 4. Fig. 5 provides a conceptual comparison of these different prototyping approaches and experimental paradigms.

Figure 36.4: Modified from Dautenhahn (2007b), sketching a typical development time line of HRI robots and showing different experimental paradigms. The dark arrows indicate that for those periods the particular experimental method is more useful than during other periods. Note, there are typically several iterations in the development process (not shown in the diagram), since systems may be improved after feedback from user studies with the complete prototype. Also, several releases of different systems may result, based on feedback from deployed robots after a first release to the user/scientific community.

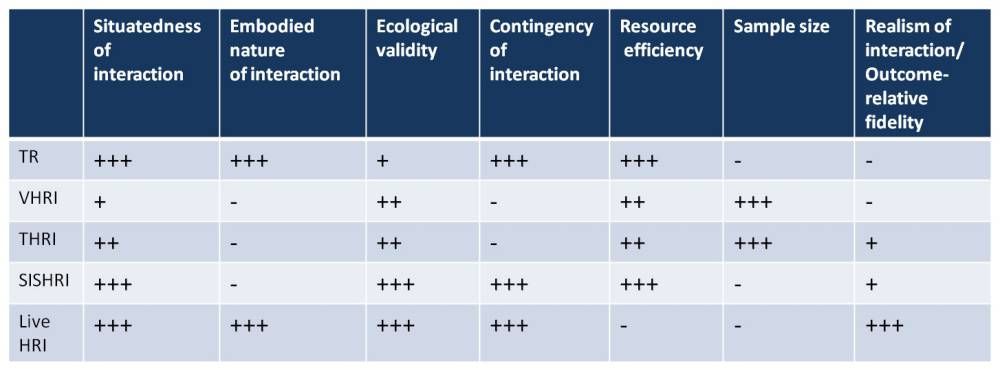

Figure 36.5: Conceptual comparison of different experimental paradigms discussed in this chapter. TR (Theatrical Robot), VHRI (Video-based HRI), THRI (Theatre-based HRI), SISHRI (Situated Interactive Simulated HRI), Live HRI. Resource efficiency means that experiments need to yield relevant results quickly and cheaply (in terms of effort, equipment required, person months etc.). Outcome-relative fidelity means that outcomes of the study must be sufficiently trustworthy and accurate to support potentially costly design decisions taken based on the results (Derbinsky et al., 2013).

Even before a robot prototype exists, mock-up models might be used in order to support the initial phase of planning and specification of the system, see e.g. Bartneck and Jun (2004). Once a system's main hardware and basic control software have been developed, and safety standards are met, first interaction studies with participants may begin.

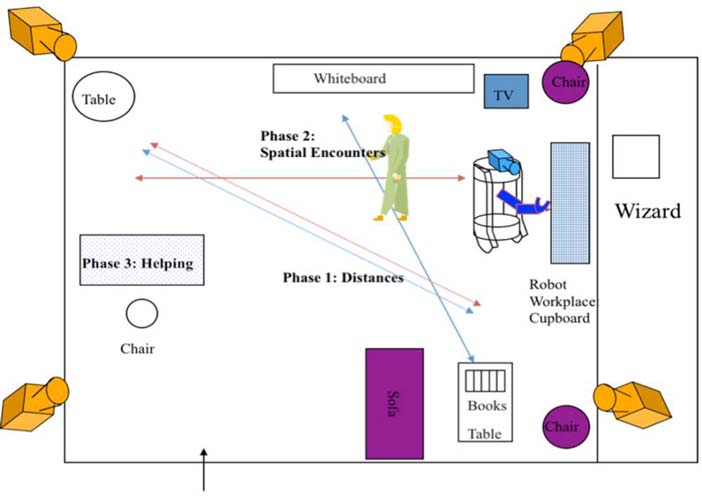

The above-mentioned Wizard-of-Oz technique (WoZ) is a popular evaluation technique that originated in HCI (Gould et al., 1983; Dahlback et al., 1993; Maulsby et al., 1993) and is now widely used in HRI research (Green et al., 2004; Koay et al., 2008; Kim et al., 2012). In order to carry out WoZ studies, a prototype version must be available that can be remotely controlled, unknown to the participants. Thus, WoZ is often used in cases where the robot's hardware has been completed but the robot's sensory, motor or cognitive abilities are still limited. However, having one or two researchers remotely controlling the robot's movements and/or speech can be cognitively demanding and impractical in situations where the goal is that the robot eventually should operate autonomously. For example, in a care, therapy or educational context, remotely controlling a robot requires another researcher and/or care staff member to be available (cf. Kim et al., 2013). WoZ can be used for full teleoperation or for partial control, e.g. to simulate the high-level decision-making process of the robot. See Fig. 6 for an example of an HRI experiment using WoZ.
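
The distinction between full teleoperation and partial control can be made concrete with a small sketch. Everything in it is hypothetical: the class `PartialWoZController`, its method names and the routine names are assumptions made for illustration and do not describe any system used in the studies cited here. The idea shown is that the hidden operator only selects high-level goals, while the robot is assumed to execute the corresponding low-level steps on its own.

```python
from collections import deque
from dataclasses import dataclass, field


def _default_routines():
    # Hypothetical low-level routines the robot is assumed to run by itself.
    return {
        "approach": ["plan_path", "drive", "stop_near_person"],
        "offer_drink": ["face_person", "say:Would you like a drink?"],
    }


@dataclass
class PartialWoZController:
    """Hypothetical partial-control WoZ set-up: the wizard decides, the robot acts."""

    routines: dict = field(default_factory=_default_routines)
    wizard_queue: deque = field(default_factory=deque)
    log: list = field(default_factory=list)

    def wizard_select(self, goal):
        """Called from the operator's console, out of the participant's view."""
        if goal not in self.routines:
            raise ValueError(f"unknown goal: {goal}")
        self.wizard_queue.append(goal)

    def step(self):
        """Run the next wizard-selected goal; stay idle if none is pending."""
        if not self.wizard_queue:
            self.log.append("idle")
            return []
        goal = self.wizard_queue.popleft()
        actions = list(self.routines[goal])
        self.log.extend(actions)
        return actions


controller = PartialWoZController()
controller.wizard_select("approach")
controller.wizard_select("offer_drink")
for _ in range(3):
    print(controller.step())
```

In a full-teleoperation variant the operator would issue every entry of the routine lists directly, which is where the cognitive load described above comes from.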

Figure 36.6: a) Two researchers controlling movement and speech of a robot used in a (simulated) home companion environment (b). 28 subjects interacted with the robot in physical assistance tasks (c), and they also had to negotiate space with the robot (d), e) layout of experimental area for WoZ study. The study was performed in 2004 as part of the EU project COGNIRON. Dautenhahn (2007a), Woods et al. (2007), Koay et al. (2006) provide some results from these human-robot interaction studies using a WoZ approach.

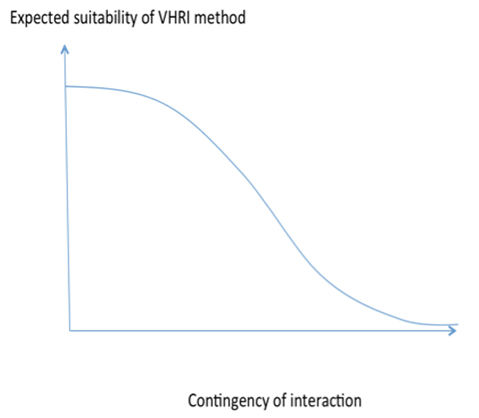

Once WoZ experiments are technically feasible, video-based methods can be applied whereby typically groups of participants are shown videos of the robots interacting with people and their environments. The VHRI (Video-based HRI) methodology has been used successfully in a variety of HRI studies (Walters et al., 2011; Severinson-Eklund, 2011; Koay et al. 2007, 2011; Syrdal et al., 2010; Lohse et al., 2008; Syrdal et al., 2008). Previous studies compared live HRI and video-based HRI and found comparable results in a setting where a robot approached a person (Woods et al., 2006a,b). However, in the scenarios that were used for the comparative study there was little dynamic interaction and co-ordination between the robot's and the person's behaviour. It can be expected that the higher the contingency and co-ordination between human and robot interaction, the less likely VHRI is to simulate live interaction experience (cf. Figure 7).

Figure 36.7: Illustration of the decreasing suitability of the Video HRI method with increasing contingency of the interaction (e.g. verbal or non-verbal coordination between the robot and the human in interaction).

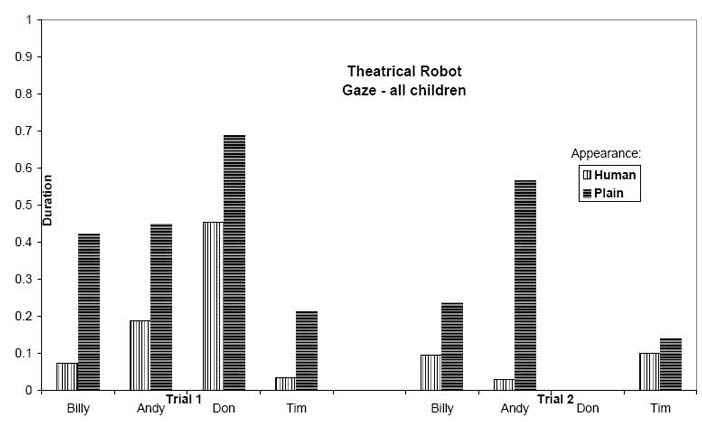

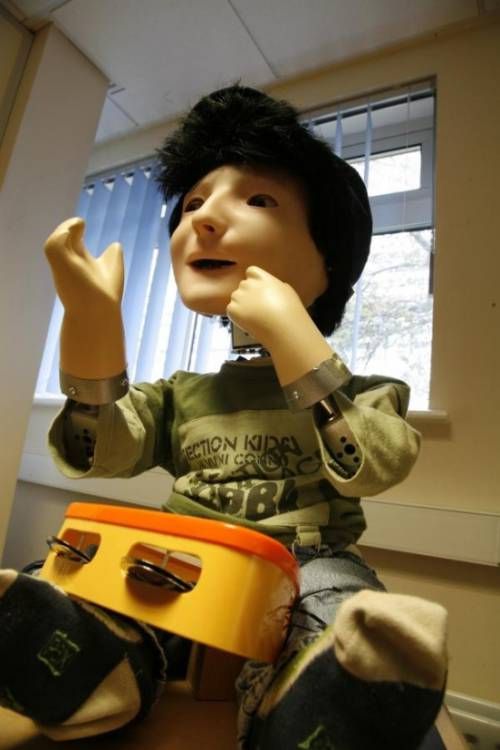

Another prototyping method that has provided promising results is the Theatrical Robot (TR) method, which can be used in instances where a robot is not yet available but where live human-robot interaction studies are desirable; for an example see Fig. 8. The Theatrical Robot describes a person (a professional such as an actor or mime artist) dressed up as a robot and behaving according to a specific and pre-scripted robotic behaviour repertoire. Thus, the Theatrical Robot can serve as a life-sized, embodied, simulated robot that can simulate human-like behaviour and cognition. Robins et al. (2004) have used this method successfully in studies which tried to find out how children with autism react to life-sized robots, and how this reaction depends on whether the robot looks like a person or looks like a robot. The small group of four children studied showed strong initial preferences for the Theatrical Robot in its robotic appearance, compared to the Theatrical Robot showing the same (robotic) behaviour repertoire but dressed as a human being, see example results in Figure 8. Note, in both conditions the 'robot' was trained not to respond to the children. In the Robins et al. (2004) study a mime artist was used in order to ensure that the TR was able to control his behaviour precisely and reliably during the trials.

The Theatrical Robot paradigm allows us to conduct user studies from a very early phase of planning of the robotic system. Once working prototypes exist, the TR method is less likely to be useful since studies can now be run with a 'real' system. However, the TR can also be used as a valuable method on its own, in terms of investigating how people react to other people depending on their appearance, or how people would react to a robot that looks and behaves very human-like. Building robots that truly look and behave like human beings is still a future goal: although android robots can simulate human appearance, they lack human-like movements, behaviour and cognition (MacDorman and Ishiguro, 2006). Thus, the TR can shortcut the extensive development process and allow us to make predictions of how people may react to highly human-like robots.

Figure 36.8: Using the Theatrical Robot paradigm in a study that investigated the responses of children with autism to a human-sized robot dressed either as a robot (plain appearance, a) or as a human person (human appearance), showing identical behaviour in both conditions. In b, c, d responses of three children in both experimental conditions are shown. An example of results showing gaze behaviour of the children towards the TR is shown in e).

In addition to prototyping robots and human-robot interaction, a key problem in many HRI studies is the prototyping of scenarios. For example, in the area of developing home companion robots, researchers study the use of robots for different types of assistance: physical, cognitive and social. This may include helping elderly users at home with physical tasks (e.g. fetch-and-carry), reminding users of appointments, events, or the need to take medicine (the robot as a cognitive prosthetic), or social tasks (encouraging people to socialize, e.g. call a friend or family member or visit a neighbor). Implementing such scenarios again requires a huge development effort, in particular when the robot's behaviour should be autonomous rather than fully scripted, and should adapt to users' individual preferences and their daily life schedule. One way to prototype a scenario is to combine a WoZ method with robotic theatre performance in front of an audience. The Theatre-based HRI method (THRI) has provided valuable feedback on users' perception of scenarios involving e.g. home companion robots (Syrdal et al., 2011; Chatley et al., 2010). Theatre and drama have been used in Human-Computer Interaction to explore issues of the use of future technologies (see e.g. Iacucci and Kuutti, 2002; Newell et al., 2006). In the context of HRI, THRI consists of a performance of actors on stage interacting with robots that are WoZ controlled or semi-autonomously controlled. Subsequent discussions with the audience, and/or questionnaires and interviews, are then used to study the audience's perception of the scenarios and the displayed technology. Discussions between the audience and the actors on stage (in character) are typically mediated by a facilitator. This method can reach larger audiences than individual HRI studies would, and can thus be very useful to prototype scenarios.

Author/Copyright holder: Courtesy of Kerstin Dautenhahn. Copyright terms and licence: CC-Att-SA (Creative Commons Attribution-ShareAlike 3.0 Unported).

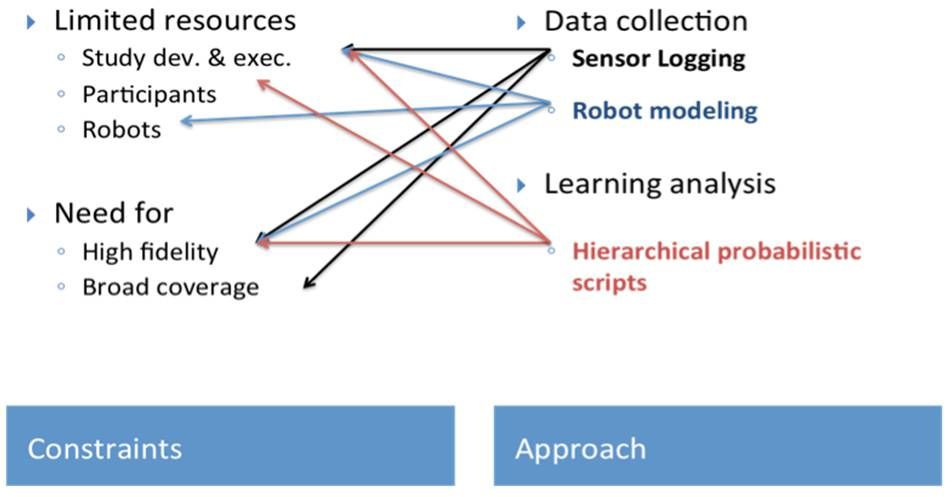

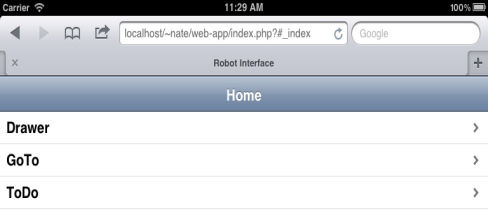

Figure 36.9 A-B-C: a) SISHRI methodological approach (Derbinsky et al., 2013): situated, real-time HRI with a simulated robot to prototype scenarios; b) example of a simulated interaction shown on the tablet used by the participant. On the left, the homepage of the web application developed for rapid scenario prototyping is shown. This demo version shows the three actions that were implemented (Drawer, GoTo and ToDo). Drawer gives the user the possibility of opening and closing the robot's drawer. GoTo is used to simulate the time that the robot will take to travel from one position to another (picture on the right). ToDo was introduced to expand the functionality of this prototype; its activities relate to the user rather than the robot and can be logged in the system (e.g. drinking, eating, etc.). On the right, the GoTo functionality is shown. In this example, the user can send the robot from the kitchen (current robot position) to any other place selected from the list (kitchen, couch, desk, drawer). In the picture, the user has chosen the kitchen.

Recently, a new resource-efficient method for scenario prototyping has been proposed. A proof-of-concept implementation is described in Derbinsky et al. (2013). Here, an individual user, with the help of a handheld device, goes 'through the motions' of robot home assistance scenarios without an actual physical robot. The tablet computer simulates the robot's actions as embedded in a smart environment. The advantage of this method is that the situatedness of the interaction is maintained, i.e. the user interacts in a real environment, in real time, with a simulated robot. This method, which can be termed SISHRI (Situated Interactive Simulated HRI), maintains the temporal and spatial aspects and the logical order of action sequences in the scenario, but omits the robot. It allows testing of acceptability and general user experience of complex scenarios, e.g. scenarios used for home assistance, without requiring a robot. The system responds based on activities recognized via the sensor network and the input from the user via the user interface. The method is likely to be most useful to prototype complex scenarios before an advanced working prototype is available (see Fig. 9).
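To make the idea of a simulated robot more concrete, the following minimal Python sketch models the three demo actions named in the Figure 36.9 caption (Drawer, GoTo and ToDo). It is an illustration only and not the implementation described by Derbinsky et al. (2013): the class `SimulatedRobot`, its method names, the linear ordering of locations and the value of `SECONDS_PER_HOP` are all hypothetical assumptions. For brevity the sketch advances a simulated clock, whereas a real SISHRI session would let the corresponding time pass in the user's real environment.

```python
from dataclasses import dataclass, field

# Location names come from the Figure 36.9 caption; their ordering and the
# travel time per step are illustrative assumptions, not measured values.
LOCATIONS = ("kitchen", "couch", "desk", "drawer")
SECONDS_PER_HOP = 5.0


@dataclass
class SimulatedRobot:
    """Hypothetical stand-in for the physical robot: tracks state and time only."""
    position: str = "kitchen"
    drawer_open: bool = False
    clock: float = 0.0                      # simulated time in seconds
    log: list = field(default_factory=list)

    def _record(self, event: str) -> None:
        # Every event is stored with the simulated time at which it happened.
        self.log.append((self.clock, event))

    def drawer(self, open_it: bool) -> None:
        """Drawer action: open or close the robot's drawer."""
        self.drawer_open = open_it
        self._record("drawer opened" if open_it else "drawer closed")

    def go_to(self, target: str) -> float:
        """GoTo action: simulate the travel time to the target location."""
        if target not in LOCATIONS:
            raise ValueError(f"unknown location: {target}")
        hops = abs(LOCATIONS.index(target) - LOCATIONS.index(self.position))
        duration = hops * SECONDS_PER_HOP
        self.clock += duration
        self.position = target
        self._record(f"arrived at {target}")
        return duration

    def to_do(self, activity: str) -> None:
        """ToDo action: log an activity of the user (e.g. drinking, eating)."""
        self._record(f"user activity: {activity}")


robot = SimulatedRobot()
travel_time = robot.go_to("desk")
robot.drawer(True)
robot.to_do("drinking")
```

The point of the sketch is that only the temporal order and duration of actions are modelled; nothing about the robot's body or appearance is simulated, which is what keeps this kind of scenario prototyping inexpensive.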

Author/Copyright holder: Courtesy of Kerstin Dautenhahn. Copyright terms and licence: CC-Att-SA (Creative Commons Attribution-ShareAlike 3.0 Unported).

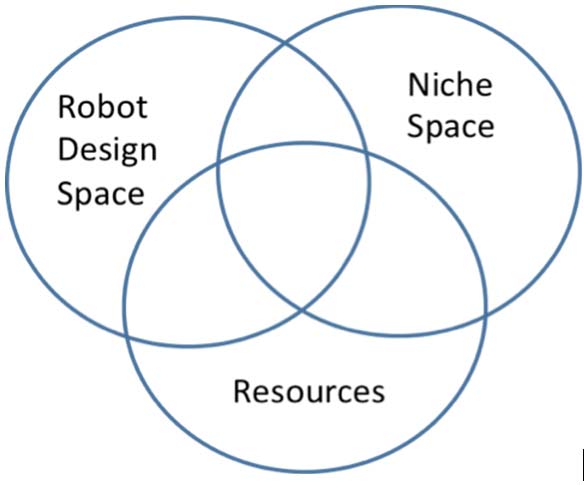

Figure 36.10: Illustrating the design space of robots. Shape and functionality are dependent on their application and use. a) KASPAR, the minimally expressive robot developed at the University of Hertfordshire, used for HRI studies including robot-assisted therapy for children with autism, b) Roomba (iRobot), a vacuum cleaning robot shown operating in the University of Hertfordshire Robot House, c) Autom, the weight loss coach. Credit: Intuitive Automata, d) Pleo robot (Ugobe), designed as a 'care-receiving' robot encouraging people to develop a relationship with it, e) Robosapien toy robot (WowWee), f) Design space, niche space and resources; see main text for explanation.

The development of any particular HRI study and the methodologies used need to consider the three key constraints shown in Fig. 10. The Robot Design Space comprises all the different possible designs in terms of robot behaviour and appearance. The Niche Space consists of the requirements for the robot and the human-robot interaction as relevant to particular scenarios and application areas. The resources (in terms of time, funding, availability of participants etc.) need to be considered when selecting any particular method for HRI studies. Exhaustively exploring the design space is infeasible, so decisions need to be made carefully.

36.4 HRI - About (not) romanticizing robots

The present reality of robotics research is that robots are far from showing any truly human-like abilities in terms of physical activities, cognition or social abilities (in terms of flexibility, "scaling up" of abilities, "common sense", graceful degradation of competencies etc.). Nevertheless, in the robotics and HRI literature they are often portrayed as "friends", "partners", "co-workers", etc., all of which are genuinely human terms. These terms are rarely used in an operational sense, and few definitions exist; most often they are used without further reflection. Previously, I proposed a more formal definition of companion robots, i.e. "A robot companion in a home environment needs to 'do the right things', i.e. it has to be useful and perform tasks around the house, but it also has to 'do the things right', i.e. in a manner that is believable and acceptable to humans" (Dautenhahn, 2007a, p. 683).

In contrast to the companion paradigm, where the robot's key function is to take care of the human's needs, in the caretaker paradigm it is the person's duty to take care of the 'immature' robot. In that same article I also argued that due to evolutionarily determined cognitive limits we may be constrained in how many "friends" we may make. When humans form relationships with people, this entails emotional, psychological and physiological investment. We would tend to make a similar investment towards robots, which do not reciprocate this investment. A robot will 'care' about us as much or as little as the programmers want it to. Robots are not people; they are machines. Biological organisms, but not robots, are sentient beings: they are alive, they have an evolutionary and developmental history, and they have life-experiences that are shaping their behaviour and their relationships with the environment. In contrast, machines are neither alive nor sentient; they can express emotions and pretend to 'bond' with you, but these are simulations, not the real experiences that humans share. The 'emotions' of a humanoid robot may look human-like but the robot does not feel anything, and the expressions are not based on any experiential understanding. A humanoid robot which looks deeply into your eyes and mutters "I love you" is running a programme. We may enjoy this interaction, in the way we enjoy role play or immersing ourselves in imaginary worlds, but one needs to be clear about the inherently mechanical nature of the interaction. As Sherry Turkle has pointed out, robots as 'relational artifacts' that are designed to encourage people to develop a relationship with them can lead to misunderstandings concerning the authenticity of the interaction (Turkle, 2007).
If children grow up with a robot companion as their main friend who they interact with for several hours each day, they will learn that they can just switch it off or lock it into a cupboard whenever it is annoying or challenging them. What concept of friendship will these children develop? Will they develop separate categories, e.g. 'friendship with a robot', 'friendship with pets' and 'friendship with people'? Will they apply the same moral and ethical concerns to robots, animals and people? Or will their notion of friendship, shaped by interactions with robots, spill over to the biological world? Similar issues are discussed in terms of children's possible addiction to computer games and game characters and to what extent these may have a negative impact on their social and moral development. Will people who grow up with a social robot view it as a 'different kind', regardless of its human or animal likeness? Will social robots become new ontological categories (cf. Kahn et al. 2004; Melson et al. 2009)? At present such questions cannot be answered; they will require long-term studies into how people interact with robots, over years or decades, and such results are difficult to obtain and may be ethically undesirable. However, robotic pets for children and robotic assistants for adults are becoming more and more widespread, so we may get answers to these questions in the future. The answers are unlikely to be 'black and white', similar to the question of whether computer games are beneficial for children's cognitive, academic and social development, where answers are inconclusive (Griffiths, 2002; Kierkegaard, 2008; Dye et al., 2009; Anderson et al., 2010; Jackson et al. 2011).

Humans have been fascinated by autonomous machines throughout history, so the fascination with robots, what they are and what they can be, will stay with us for a long time to come. However, it is advisable to have the discussion on the nature of robots based on facts and evidence, and informed predictions, rather than pursuing a romanticizing fiction.

36.5 HRI - there is no such thing as 'natural interaction'

A widespread assumption within the field of HRI is that 'good' interaction with a robot must reflect natural (human-human) interaction and communication as closely as possible in order to ease people's need to interpret the robot's behaviour. Indeed, people's face-to-face interactions are highly dynamic and multi-modal, involving a variety of gestures, language (content as well as prosody are important), body posture, facial expressions, eye gaze, in some contexts tactile interactions, etc. This has led to intensive research into how robots can produce and understand gestures, how they can understand when they are being spoken to and respond correspondingly, and how robots can use body posture, eye gaze and other cues to regulate the interaction; cognitive architectures are also being developed to provide robots with natural social behaviour and communicative skills (e.g. Yamaoka et al. 2007; Shimada and Kanda, 2012; Salem, 2012; Mutlu et al., 2012). The ultimate goal inherent in such work is to create human-like robots, which look human-like and behave in a human-like manner. While we discuss below in more detail that the goal of human-like robots needs to be reflected upon critically, the fundamental assumption of the existence of 'natural' human behaviour is also problematic. What is natural behaviour to begin with? Is a person behaving naturally in his own home, when playing with his children, talking to his parents, going to a job interview, meeting colleagues, giving a presentation at a conference? The same person behaves differently in different contexts and at different times during their lifetime. Were our hunter-gatherer ancestors behaving naturally when trying to avoid big predators and finding shelter? If 'natural' is meant to be 'biologically realistic' then the argument makes sense: a 'natural gesture' would then be a gesture using a biological motion profile and an arm that faithfully models human arm morphology.
Similarly, a natural smile would then try to emulate the complexity of human facial muscles and emotional expressions. However, when moving up from the level of movements and actions to social behaviour, the term 'natural' is less meaningful. To give an example, how polite shall a robot be? Humans show different behaviour and use different expressions depending on whether we attend a formal work dinner or are having a family dinner at home. As humans, we may have many different personal and professional roles in life, e.g. daughter/son, sibling, grandmother, uncle, spouse, employee, employer, committee member, volunteer, etc. We behave slightly differently in all these circumstances: the role influences the way we dress and speak, what we say and how we say it, our style of interaction, the manner in which we use tactile interaction, etc. We can seamlessly switch between these different roles, which are just different aspects of 'who we are', as expressions of our self or our 'centre of narrative gravity' as it has been phrased by Daniel Dennett. People can deal with such different situations since we continuously re-construct the narratives of our (social) world (Dennett, 1989/91; see also Turner, 1996).

"Our fundamental tactic of self-protection, self-control, and self-definition is not building dams or spinning webs, but telling stories - and more particularly concocting and controlling the story we tell others - and ourselves - about who we are.

These strings or streams of narrative issue forth as if from a single source - not just in the obvious physical sense of flowing from just one mouth, or one pencil or pen, but in a more subtle sense: their effect on any audience or readers is to encourage them to (try to) posit a unified agent whose words they are, about whom they are: in short, to posit what I call a center of narrative gravity" (Dennett, 1989/91).

Thus, for humans, behaving 'naturally' is more than having a given or learnt behaviour repertoire and making rational decisions in any one situation on how to behave. We are 'creating' these behaviours, reconstructing them, taking into consideration the particular context, interaction histories, etc.; we are creating behaviour consistent with our 'narrative self'. For humans, such behaviour can be called 'natural'.

What is 'natural' behaviour for robots? Where is the notion of 'self', their 'centre of narrative gravity'? Today's robots are machines; they may have complex 'experiences' but these experiences are no different from those of other complex machines. We can program them to behave differently in different contexts, but from their perspective, it does not make any difference whether they behave one way or the other. They are typically not able to relate perceptions of themselves and their environment to a narrative core; they are not re-creating, but rather recalling, experience. Robots do not have a genuine evolutionary history: their bodies and their behaviour (including gestures etc.) have not evolved over many years as an adaptive response to challenges in the environment. For example, the shape of our human arms and hands has very good 'reasons'. It goes back to the design of the forelimbs of our vertebrate ancestors, used first for swimming, then by tetrapods for walking and climbing; later, bipedal postures freed the hands to grasp and manipulate objects, to use tools, or to communicate via gestures. The design of our arms and hands is not accidental, and is not 'perfect' either. But our arms and hands embody an evolutionary history of adaptation to different environmental constraints. In contrast, there is no 'natural gesture' for a robot, in the same way as there is no 'natural' face or arm for a robot.

To conclude, there appears to be little basis for stating that a particular behaviour X is natural for a robot Y. Any behaviour of a robot will be natural or artificial solely depending on how the humans interacting with the robot perceive it. Thus, naturalness of robot behaviour is in the eyes of the beholder, i.e. the human interacting with or watching the robot; it is not a property of the robot's behaviour itself.

36.6 HRI - new roles

While more and more robotic systems can be used 'in the wild' (Sabanovic et al., 2006; Salter et al., 2010), researchers have discussed different roles for such robots.

Previously, I proposed different roles of robots in human society (Dautenhahn, 2003), including:

- a machine operating without human contact;
- a tool in the hands of a human operator;
- a peer as a member of a human-inhabited environment;
- a robot as a persuasive machine influencing people's views and/or behaviour (e.g. in a therapeutic context);
- a robot as a social mediator mediating interactions between people;
- a robot as a model social actor.

Dautenhahn et al. (2005) investigated people's opinions on viewing robots as friends, assistants or butlers. Others have discussed similar roles of robots and humans, e.g. humans can assume the role of a supervisor, an operator, a mechanic, a peer, or a bystander (Scholtz, 2003). Goodrich and Schultz (2007) have proposed roles for a robot as a mentor for humans or information consumer whereby a human uses information provided by a robot. Other roles that have been discussed recently are robots as team members in collaborative tasks (Breazeal et al. 2004), robots as learners (Thomaz and Breazeal, 2008; Calinon et al., 2010; Lohan et al., 2011), and robots as cross-trainers in HRI teaching contexts (Nikolaidis & Shah, 2013). Teaching robots movements, skills and language in interaction and/or by demonstration is a very active area of research (e.g. Argall et al. 2009; Thomaz and Cakmak 2009; Konidaris et al., 2012; Lyon et al. 2012; Nehaniv et al., 2013); however, how to teach robots in natural, unstructured and highly dynamic environments remains a challenge. For humans and some other biological species social learning is a powerful tool for learning about the world and each other, and for teaching and developing culture, and it remains a very interesting challenge for future generations of robots learning in human-inhabited environments (Nehaniv & Dautenhahn, 2007). Ultimately, robots that can flexibly and efficiently learn socially appropriate behaviours, which enhance their own skills and performance and are acceptable to the humans interacting with them, will have to develop suitable levels of social intelligence (Dautenhahn, 1994, 1995, 2007a).

36.7 Robots as Service Providers

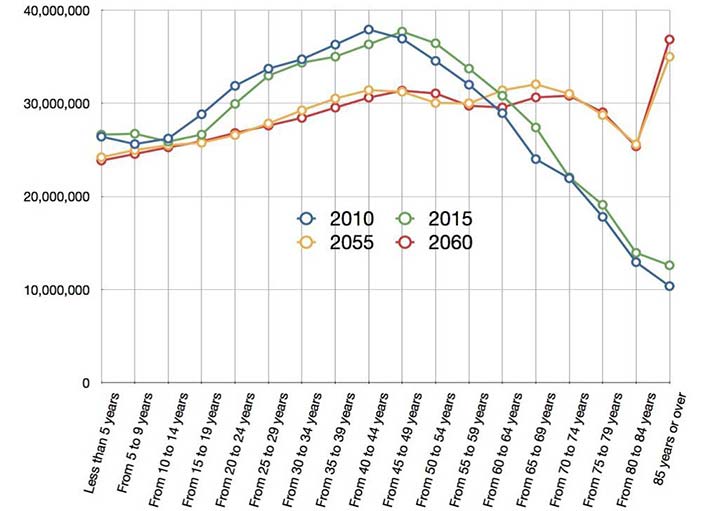

A lot of research in intelligent, autonomous robots has focused on how robots could provide services (assistive or otherwise) that were originally performed by people. Robots replaced many workers on factory assembly lines, and more recently robots have been discussed e.g. in the context of providing solutions to care for elderly people in countries with rapidly changing demographics (see Fig. 11). In many scenarios, robots are meant to work alongside people, and to take over some tasks that were previously performed by humans.

Recently, a number of projects worldwide have investigated the use of robots in elder-care in order to allow users to live independently in their homes for as long as possible, see e.g. Heylen et al. (2012), Huijnen et al. (2011). Such research poses many technological, ethical and user-related challenges. For examples of such research projects see Fig. 6 for HRI research on home companions in the COGNIRON project (2004-2008), Fig. 12 for research in the LIREC project (2008-2012), and Fig. 1 for social and empathic home assistance in a smart home as part of the above mentioned ACCOMPANY project. Many such projects use a smart home environment, e.g. the University of Hertfordshire Robot House which is equipped with dozens of sensors. Success in this research domain will depend on acceptability, not only by the primary users of such systems (elderly people) but also by other users (family, friends, neighbours) including formal and informal carers. Thus, taking into consideration the 'human component' is important for such projects. See Amirabdollahian et al. (2013) for a more detailed discussion of the objectives and approaches taken in the ACCOMPANY project.

Author/Copyright holder: Courtesy of Kerstin Dautenhahn. Copyright terms and licence: CC-Att-SA (Creative Commons Attribution-ShareAlike 3.0 Unported).

Figure 36.11: Population projections for the 27 Member States, showing an increase of people aged 65 and above from 17.57% to 29.54%, with a decrease of people aged between 15 and 64 from 67.01% to 57.42%. Diagram taken with permission from Amirabdollahian et al. (2013).

Note, the domain of robots for elder-care poses many ethical challenges (see e.g. Sharkey and Sharkey, 2011, 2012), and the investigation of these issues is indeed one of the aims of the ACCOMPANY project. In the following I would like to provide some personal thoughts on some of these matters. Often robots are envisaged as providing company and social contact, stimulation, motivation, and also facilitating communication among e.g. residents in a care home; see many years of studies with the seal robot PARO (Wada and Shibata, 2007; Shibata et al. 2012). Indeed, care staff often have very little time for social contact (typically in the range of a few minutes per day per person). So care providers may show a great interest in using robots for social company, and elderly people might welcome such robots as a means to combat their loneliness. However, as I have argued above, interactions with robots are inherently mechanical in nature; robots do not reciprocate love and affection, they can only simulate those. Thus, human beings are and will remain the best experts on providing social contact and company, experiencing and expressing empathy, affection, and mutual understanding. While it is difficult to design robots that can do the more practical tasks that dominate the work day of care staff, e.g. cleaning, feeding, washing elderly people, robots may be designed to fulfill those tasks, potentially freeing up care staff to provide social contact with genuine, meaningful interactions. Unfortunately, it is technically highly challenging to build robots that can actually perform such tasks, although it is an active area of research (cf. the RI-MAN robot and Yamazaki et al., 2012), while it is well within our reach to build robots that provide some basic version of company and social interaction, 'relational artifacts' according to Turkle et al. (2006), that already exist today.
If one day robots are able to provide both social and non-social aspects of care, will human care staff become obsolete due to the need to cut costs in elder-care? Or will robots be used to do the routine work, so that the time of human carers is freed to engage with elderly residents in meaningful and emotionally satisfying ways? The latter option would not only be more successful in providing efficient and at the same time humane care, it would also acknowledge our biological roots, emotional needs, and evolutionary history: as a species, our social skills are the one domain where we typically possess our greatest expertise, while our 'technical/mechanical' expertise can be replaced more easily by machines.

Author/Copyright holder: Courtesy of Kerstin Dautenhahn. Copyright terms and licence: CC-Att-SA (Creative Commons Attribution-ShareAlike 3.0 Unported).

Figure 36.12: The Sunflower robot developed by Dr. Kheng Lee Koay at the University of Hertfordshire. Based on a Pioneer mobile platform (left), a socially interactive and expressive robot was developed for the study of assistance scenarios for a robot companion in a home context.

An example of a robot designed specifically for home assistance is the Sunflower robot illustrated in Figure 12. It consists of a mobile base, a touch-screen user interface and diffuse LED display panels to provide expressive multi-coloured light signals to the user. Other expressive behaviours include sound, base movement, and movements of the robot's neck. The non-verbal expressive behaviours (light, sound, movements) have been inspired by the expressive behaviour that dogs display towards their owners in human-dog interaction, in scenarios similar to those used in the Robot House, in collaboration with ELTE in Hungary (Prof. Ádám Miklósi's group) (Syrdal et al., 2010; Koay et al., 2013). The robot possesses some human-like features (a head, arms) but its overall design is non-humanoid. This design follows our previous research results showing that mechanoid (mechanical-looking) robots are well accepted by users with different individual preferences.

Panels of Figure 12: a) early Sunflower prototype, b, c) Sunflower, d) HRI home assistance scenarios with an early Sunflower prototype in comparison to dog-owner interaction in a comparable scenario, e) (Syrdal et al., 2010). For different expressions of Sunflower see (the picture gallery) and (a video).

36.8 Robots as Social Mediators

Above we discussed the role of robots as service providers, companions and 'helpers'. A complementary view of robots is to consider their role as social mediators — machines that help people to connect with each other. Such robots are not meant to replace or complement humans and their work; instead, their key role is helping people to engage with others. One area where robotic social mediators have been investigated is the domain of robot-assisted therapy (RAT) for children with autism.

Autism is a lifelong developmental disorder characterized by impairments in communication, social interaction and imagination and fantasy (often referred to as the triad of impairments; Wing, 1996) as well as restricted interests and stereotypical behaviours. Autism is a spectrum disorder and we find large individual differences in how autism may manifest itself in a particular child (for diagnostic criteria see DSM IV, 2000). The exact causes of autism are still under investigation, and at present no cure exists. A variety of therapeutic approaches exist, and using robots or other computer technology could complement these existing approaches. The prevalence rate for autism spectrum disorders is often reported as around 1 in 100 but statistical data vary.

While in 1979 Weir and Emanuel had encouraging results with one child with autism using a button box to control a LOGO Turtle from a distance, the use of interactive, social robots as therapeutic tools was first introduced by the present author (Dautenhahn, 1999) as part of the Aurora project (1998, ongoing). Very early in this work the concept of a social mediator for children with autism was investigated, with the aim of encouraging interaction between children with autism and other people. The use of robots for therapeutic or diagnostic applications has grown rapidly over the past few years; see recent review articles which show the breadth of this research field and the number of active research groups (Diehl et al., 2012; Scassellati et al. 2012), compared to an earlier review (Dautenhahn & Werry, 2004).

In the earliest work on robots as social mediators for children with autism, Werry et al. (2001) and the present author (Dautenhahn 2003) gave examples of trials with pairs of children who started interacting with each other in a scenario where they had to share an autonomous, mobile robot that they could play with. Work with the humanoid robot Robota (Billard et al. 2006) later showed that the robot could encourage children with autism to interact with each other, as well as with a co-present experimenter (Robins et al. 2004; Robins et al. 2005a). Note, the role of a robotic social mediator is not to replace, but to facilitate human contact (Robins et al., 2005a,b, 2006). Similarly, recent work with the minimally expressive humanoid robot KASPAR discusses the robot's role as a salient object that mediates and encourages interaction between the children and co-present adults (Robins et al., 2009; Iacono et al., 2011). Figures 13 to 16 give examples of trials conducted by Dr. Ben Robins where robots have been used as social mediators.

A key future challenge for robots as social mediators is to investigate how robots can adapt in real-time to different users. Francois et al. (2009) provide a proof-of-concept study showing how an AIBO robot can adapt to different interaction styles of children with autism playing with it; see also a recent article by Bekele et al. (2013).

Author/Copyright holder: Courtesy of Kerstin Dautenhahn. Copyright terms and licence: CC-Att-SA (Creative Commons Attribution-ShareAlike 3.0 Unported).

Figure 36.13 A-B: KASPAR as a social mediator for children with autism. Two boys playing an imitation game, one child controls the robot's expressions, the other child has to imitate KASPAR, then the children switch roles.

Author/Copyright holder: Courtesy of Kerstin Dautenhahn. Copyright terms and licence: CC-Att-SA (Creative Commons Attribution-ShareAlike 3.0 Unported).

Figure 36.14: Two children with autism enjoying the imitation game with KASPAR. One child uses a remote control to make KASPAR produce gestures and body postures; the role of the second child is to imitate KASPAR. After a while the roles are switched.

Author/Copyright holder: Courtesy of Kerstin Dautenhahn. Copyright terms and licence: CC-Att-SA (Creative Commons Attribution-ShareAlike 3.0 Unported).

Figure 36.15 A-B: Sharing with another person (an adult on the left, another child on the right) while playing games with KASPAR.

Author/Copyright holder: Courtesy of Kerstin Dautenhahn. Copyright terms and licence: CC-Att-SA (Creative Commons Attribution-ShareAlike 3.0 Unported).

Figure 36.16 A-B-C-D: Two children with autism enjoying a collaborative game with Robota. The robot is remotely controlled by the experimenter. Robota will only move and adopt a certain posture if both children simultaneously adopt this posture. Findings showed that this provided a strong incentive for the children to coordinate their movements.

The second example area for robots being used as social mediators concerns remote human-human interaction.

While in robotics research touch sensors have been used widely, e.g. to allow robots to avoid collisions or to pick up objects, the social dimension of human-robot touch has only recently attracted attention. Humans are born social and tactile creatures. Seeking out contact with the world, including the social world, is key to learning about oneself, the environment, others, and the relationships we have with the world. Through tactile interaction we develop cognitive, social and emotional skills, and attachment to others. Tactile interaction is the most basic form of human communication (Hertenstein, 2006). Studies have shown the devastating effects that deprivation of touch in early childhood can have (e.g. Davis, 1999).

Social robots are usually equipped with tactile sensors in order to encourage play and allow the robot to respond to human touch, e.g. AIBO (Sony), Pleo (Ugobe), PARO (Shibata et al., 2012). Using tactile HRI to support human-human communication over distance illustrates the role a robot could play in mediating human contact (Mueller et al., 2005; Lee et al., 2008; The et al., 2008; Papadopoulos et al., 2012a,b).

To illustrate this research direction, Fotios Papadopoulos has investigated how autonomous AIBO robots (Sony) could mediate distant communication between two people engaging in online game activities and interaction scenarios. Here, the long-term goal is to develop robots as social mediators that can assist human-human communication in remote interaction scenarios, in order to support, for example, friends and family members who are temporarily or for the long term prevented from face-to-face interaction. One study compared two settings: in the first, people communicated through a system named AiBone, which combined video communication with interaction with and through an AIBO robot; in the second, no robots were involved and standard computer interfaces were used instead (Papadopoulos et al., 2012). The experiment involved twenty pairs of participants who communicated using video conference software. Findings showed that participants expressed more social cues when using the robot, and shared more of their game experiences with each other. However, results also showed that in terms of how efficiently they performed the task (navigating a maze), users did better without the robot. These results point to a trade-off that must be carefully balanced: the efficiency of interaction and communication modes on the one hand, and their social relevance in mediating human-human contact and supporting relationships on the other. A second experiment used a less competitive collaborative game called AIBOStory. Using this remote interactive story-telling system, participants could collaboratively create and share common stories through an integrated, autonomous robot companion acting as a social mediator between two remotely located people. Following an initial pilot study, the main experiment studied long-term interactions of 10 pairs of participants using AIBOStory. Results were compared with a condition not involving any physical robot. Results suggest user preferences towards the robot mode, thus supporting the notion that physical robots in the role of social mediators, affording touch-based human-robot interaction and embedded in a remote human-human communication scenario, may improve communication and interaction between people (Papadopoulos, 2012b).

The use of robots as social mediators is different from the approach of considering robots as 'permanent' tools or companions — a mediator is no longer needed once mediation has been successful. For example, a child who has learnt all it can learn from a robotic mediator will no longer need the robot; a couple being separated for a few months will not need remote communication technology any more once they are reunited. Thus, the ultimate goal of a robotic mediator would be to disappear eventually, after the 'job' has been done.

36.9 HRI - there is a place for non-humanoid robots

It is often assumed as 'given' (i.e. not reflected upon) that the ultimate goal for designers of robots for human-inhabited environments is to develop humanoid robots, i.e. robots with a human-like shape, two legs, two arms, a head, and social behaviour and communication abilities similar to those of human beings. Different arguments are often provided, some technical, others non-technical:

humanoid robots would be able to operate machines and work in environments that originally were designed for humans, e.g. the humanoid robot would be able to open our washing machine and use our tool box. This would be in contrast to robots that require a pre-engineered environment.

in many applications robots are meant to be used in tasks that require human-like body shapes, e.g. arms to manipulate objects, legs to walk over uneven terrain etc.

the assumption that humanoid robots would have greater acceptability by people, that they might 'blend in' better, that people would prefer to interact with them. It is argued that people would be able to more easily predict and respond to the robot's behaviour due to their familiarity with human motion and behaviour, and predictability may contribute to safety.

the assumption that those robots would better fulfill human-like tasks, e.g. operating machinery and functioning in an environment designed for people, or for the purpose of a robot carrying out human-like tasks, e.g. companion robots assisting people in their homes or in a hospital or care home.

Likewise, in the domain of life-like agents, e.g. virtual characters, a similar tendency towards human-like agents can be found. Previously, I described this tendency as the 'life-like agent hypothesis' (Dautenhahn, 1999):

"Artificial social agents (robotic or software) which are supposed to interact with humans are most successfully designed by imitating life, i.e. making the agents mimic as closely as possible animals, in particular humans. This comprises both 'shallow' approaches focusing on the presentation and believability of the agents, as well as 'deep' architectures which attempt to model faithfully animal cognition and intelligence. Such life-like agents are desirable since

(1) The agents are supposed to act on behalf of or in collaboration with humans; they adopt roles and fulfill tasks normally done by humans, thus they require human forms of (social) intelligence.

(2) Users prefer to interact ideally with other humans and less ideally with human-like agents. Thus, life-like agents can naturally be integrated in human work and entertainment environment, e.g. as assistants or pets.

(3) Life-like agents can serve as models for the scientific investigation of animal behaviour and animal minds".

(Dautenhahn, 1999)

Argument (3) presented above easily translates to robotic agents and companions, since these may be used to study human and animal behaviour, cognition and development (MacDorman and Ishiguro, 2006). Clearly, the humanoid robot is an exciting area of research, not only for those researchers interested in the technological aspects but also, importantly, for those interested in developing robots with human-like cognition; the goal would be to develop advanced robots, or to use the robots as tools for the study of human cognition and development (cf. the iCub, which exemplifies this work, e.g. Metta et al., 2010; Lyon et al., 2012). When trying to achieve human-like cognition, it is best to choose a humanoid platform, due to the constraints and interdependencies of animal minds and bodies (Pfeifer, 2007). Precursors of this work can be found in Adaptive Behaviour and Artificial Life research using robots as models to understand biological systems (e.g. Webb, 2001; Ijspeert et al., 2005).

However, arguments (1) and (2) are problematic, for the following reasons:

Firstly, while humans have a natural tendency to anthropomorphize the world and to engage even with non-animate objects (such as robots) in a social manner (e.g. Reeves and Nass, 1996; Duffy, 2003), a humanoid shape often evokes expectations concerning the robot's abilities: human-like hands and fingers suggest that the robot is able to manipulate objects in the same way humans can, a head with eyes suggests that the robot has advanced sensory abilities such as vision, and a robot that produces speech is expected also to understand when spoken to. More generally, a human-like form and human-like behaviour are associated with human-level intelligence and general knowledge, as well as human-like social, communicative and empathic understanding. Due to limitations both in robotics technology and in our understanding of how to create human-like levels of intelligence and cognition, people interacting with a robot quickly realize its limitations, which can cause frustration and disappointment.

Secondly, if a non-humanoid shape can fulfill the robot's envisaged function, then this may be the most efficient as well as the most acceptable form. For example, the autonomous vacuum cleaning robot Roomba (iRobot) has been well accepted by users as an autonomous, but clearly non-humanoid robot. Some users may attribute personality to it, but the functional shape of the robot clearly signifies its robotic nature, and indeed few owners have been shown to treat the robot as a social being (Sung et al., 2007, 2008). Thus, rather than trying to use a humanoid robot operating a vacuum cleaner in a human-like manner (which is very hard to implement), an alternative efficient and acceptable solution has been found. Similarly, the ironing robot built by Siemens (Dressman) does not try to replicate the way humans iron a shirt but finds an alternative, technologically simpler solution.

Building humanoids which operate and behave in a human-like manner is technologically highly challenging and costly in terms of time and effort required, and it is unclear when such human-likeness may be achieved (if ever) in the future. But even if such robots were around, would we want them to replace e.g. the Roomba? The current tendency to focus on humanoid robots in HRI and robotics may be driven by scientific curiosity, but it is advisable to consider the whole design space of robots, and how the robot's design may be very suitable for particular tasks or application areas. Non-humanoid, often special purpose machines, such as the Roomba, may provide cheap and robust solutions to real-life needs, e.g. to get the floor cleaned, in particular for tasks that involve little human contact. For tasks that do involve a significant amount of human-robot interaction, some humanoid characteristics may add to the robot's acceptance and success as an interactive machine, and may thus be better justified. Note that the design space of robots is huge, and 'humanoid' does not necessarily mean 'as closely as possible resembling a human'. A humanoid robot such as Autom (2013), designed as a weight loss coach, has clearly human-like but very simplified features, more reminiscent of a cartoon design. At the other end of the spectrum, towards human-like appearance, we find the androids developed by Hiroshi Ishiguro and his team (http://www.geminoid.jp/en/index.html), or David Hanson's robots (2013). However, in android technology the limitations are clearly visible in terms of producing human-like motor control, cognition and interactive skills. Androids have been proposed, though, as tools to investigate human cognition (MacDorman and Ishiguro, 2006).

Thus, social robots do not necessarily need to 'be like us'; they do not need to behave or look like us, but they need to do their jobs well, integrate into our human culture and provide an acceptable, enjoyable and safe interaction experience.

36.10 HRI - Being safe