Imagine you’re a business owner who wants to know what’s working and what’s not on your website, and where you need improvements. There are plenty of research methods you can try, such as user interviews, usability tests, and A/B testing. Read on to see how a user experience (UX) survey helps you gather valuable insights and pinpoint the areas you’ll need to work on to get a site, or any other digital product or service for that matter, in tip-top shape!

See how UX surveys can offer actionable insights, presenting qualitative data that informs decisions.

Table of contents

- What are UX Surveys?

- When and Why Should You Conduct a UX Survey?

- 6 UX Survey Best Practices From Experts

- The Ultimate Guide to Conduct a UX Survey

- Step 1: Define Your Objectives

- Step 2: Identify Your Target Audience

- Step 3: Craft Engaging Questions for the Questionnaire

- Step 4: Select a Tool For the UX Research Survey

- Step 5: Pilot the Survey

- Step 6: Launch the Survey

- Step 7: Analyze and Interpret the Results

- Step 8: Share Insights and Implement Changes

- The 20 Best User Experience Survey Questions

- What are Some Great Free UX Survey Templates?

- Final Thoughts—And The Take Away

What are UX Surveys?

UX surveys, or user experience surveys, help you gather information about users’ feelings, thoughts, and behaviors related to a product or service they encounter. Online surveys form a part of the broader field of usability surveys. They focus on understanding how users interact with a system, application, or website, and they’re helpful sources of qualitative data. That data can give you actionable insights to inform decisions that boost customer satisfaction as you create a more user-centered design.

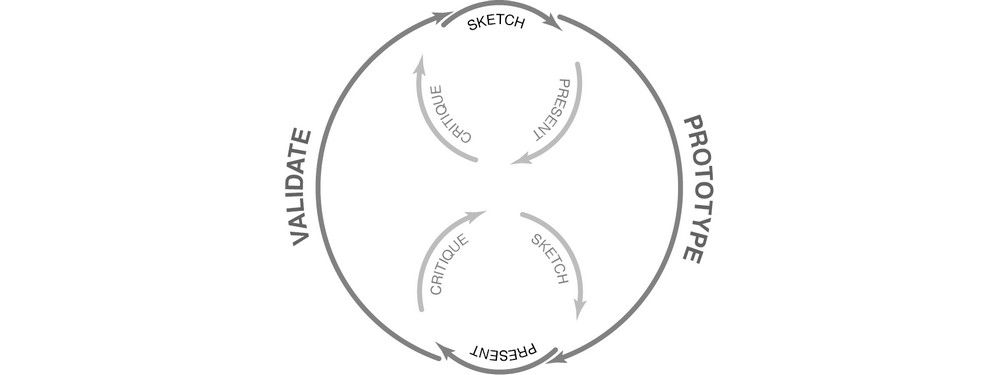

© Interaction Design Foundation, CC BY-SA 4.0

1. Customer Effort Score Surveys (CES)

CES surveys assess how simple it is for customers to complete tasks with what your company offers them. The result works like a score that tells you whether using your product or getting help from your service team was a breeze or a struggle for the customer. People appreciate straightforward questions, and you, as a UX researcher, will appreciate straightforward feedback that takes less time to process. Time is precious, so spending less effort and time on fixing issues is better. Note that ease of experience can be more revealing than overall satisfaction, and experts use the Customer Effort Score as a reliable data source.

For instance, after a customer service interaction, the question could be:

"How easy was resolving your issue with our customer support?"

Very Difficult

Difficult

Moderate

Easy

Very Easy
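To turn answers like these into a single score, one option is to map each label to a number and take the average. The sketch below assumes a 1 to 5 mapping (1 for “Very Difficult” up to 5 for “Very Easy”); the mapping, the function name `customer_effort_score`, and the sample answers are illustrative assumptions rather than a fixed standard.

```python
from statistics import mean

# Assumed mapping from the five answer labels to a 1-5 score (illustrative only).
CES_SCALE = {
    "Very Difficult": 1,
    "Difficult": 2,
    "Moderate": 3,
    "Easy": 4,
    "Very Easy": 5,
}


def customer_effort_score(answers):
    """Return the mean effort score (1 = hardest, 5 = easiest) for a list of labels."""
    if not answers:
        raise ValueError("need at least one answer")
    return mean(CES_SCALE[answer] for answer in answers)


# Hypothetical responses to "How easy was resolving your issue?"
sample_answers = ["Easy", "Very Easy", "Moderate", "Easy", "Difficult"]
ces = customer_effort_score(sample_answers)
print(f"CES: {ces:.1f} out of 5")  # prints "CES: 3.6 out of 5"
```

A higher average means customers found the task easier. Some teams report the share of “Easy” and “Very Easy” answers instead, so pick one approach and stick with it so scores stay comparable over time.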

2. Customer Satisfaction Surveys (CSAT)

A CSAT survey measures how happy customers are with your company. The main question is, “How satisfied are you with our service?” Answers range from 1, meaning “very dissatisfied,” to 5, which indicates “very satisfied.”

CSAT surveys focus on individual interactions—things like purchasing or using customer support—and they use numeric scales to track satisfaction levels over time. These surveys help you understand what your customers’ needs are like—and pinpoint issues with your products or services. They allow you to categorize customers based on their satisfaction levels, too, and that helps with targeted improvements.
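Here’s a minimal sketch of how you might summarize 1 to 5 CSAT ratings and sort respondents into satisfaction groups. It assumes a common convention in which ratings of 4 or 5 count as “satisfied”; that cutoff, the bucket names, and the sample ratings are assumptions for illustration.

```python
from collections import Counter


def csat_summary(ratings):
    """Summarize 1-5 satisfaction ratings.

    Returns the percentage of satisfied respondents (ratings of 4 or 5,
    an assumed convention) and a count of respondents per bucket.
    """
    if not ratings:
        raise ValueError("need at least one rating")
    if any(r not in (1, 2, 3, 4, 5) for r in ratings):
        raise ValueError("ratings must be integers from 1 to 5")

    def bucket(rating):
        if rating >= 4:
            return "satisfied"
        if rating == 3:
            return "neutral"
        return "dissatisfied"

    buckets = Counter(bucket(r) for r in ratings)
    csat_percent = 100 * buckets["satisfied"] / len(ratings)
    return csat_percent, buckets


# Hypothetical ratings collected after a support interaction.
sample_ratings = [5, 4, 4, 3, 2, 5, 1, 4]
csat_percent, buckets = csat_summary(sample_ratings)
print(f"CSAT: {csat_percent:.1f}%")  # prints "CSAT: 62.5%"
print(dict(buckets))
```

Running the same summary after each release or support change gives you a simple way to track satisfaction levels over time.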

3. Net Promoter Score Surveys (NPS)

NPS surveys are simple and quick since they’ve got just one question: “On a scale from 0 to 10, how likely are you to recommend this product/company to a friend or colleague?” From the score, you can segment respondents into one of three categories:

Promoters (score 9-10): They’re your biggest fans, the ones who are likely to recommend your product.

Passives (score 7-8): These folks find your product or service satisfactory enough, but the loyalty isn’t there, so watch out for them as they could switch to competitors with ease.

Detractors (score 0-6): They’re unhappy customers and, in an age when anybody can write about anything online, they’re the ones who could harm your brand through negative word-of-mouth.

To get a good big-picture view, you can calculate the NPS score by subtracting the Detractors’ percentage from the Promoters’—it’ll give you a good snapshot of customer loyalty and areas for improvement.
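The calculation is simple enough to sketch in a few lines. The function name `net_promoter_score` and the sample scores below are made up for illustration; the score bands (9-10 promoters, 7-8 passives, 0-6 detractors) are the ones described above.

```python
def net_promoter_score(scores):
    """Return NPS: percentage of promoters minus percentage of detractors."""
    if not scores:
        raise ValueError("need at least one score")
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("scores must be between 0 and 10")

    promoters = sum(1 for s in scores if s >= 9)   # scores 9-10
    detractors = sum(1 for s in scores if s <= 6)  # scores 0-6
    # Passives (7-8) count toward the total but toward neither group.
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical answers from ten respondents.
sample_scores = [10, 9, 9, 8, 7, 6, 3, 10, 5, 9]
nps = net_promoter_score(sample_scores)
print(f"NPS: {nps:.0f}")  # prints "NPS: 20"
```

In this sample, 50% of respondents are promoters and 30% are detractors, so the NPS is 20. The result can range from -100 (everyone is a detractor) to 100 (everyone is a promoter).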

4. Close-ended Questions for Quantitative Research

Well-designed closed-ended questions are nice and easy to answer: users pick from predefined options using checkboxes, scales, or radio buttons. Surveys like these are well suited to collecting data, for example in exit surveys that ask users about their shopping experience, and the answers provide actionable data such as customer preferences or common problems. You can “plug” the insights you get into your redesign efforts.

Get more insights on quantitative research in this course on Data-driven Design.

You may ask,

"How satisfied are you with our delivery speed?"

The options could be:

Very Satisfied

Satisfied

Neutral

Dissatisfied

Very Dissatisfied

For closed-ended ones, users don’t need to type out their thoughts—they’ve got to just pick an option that best describes their feelings. It’s a “win-win,” and it’s both quick for the user and easy for the company to analyze.

5. Open-ended Questions for Qualitative User Research

While closed-ended questions offer fixed options for quick responses (and they’re a great convenience because of it), open-ended questions allow for more detailed, free-form answers. They ask for written responses, and so they dig deeper into how users feel and what they expect from a brand and its product or service. It may take more time to analyze the responses you get from this type of survey, but they’re worth the “work” because they offer nuanced insights.

For example, ask a question like “What feature do you wish we had?” and what you get back can lead to ideas for product enhancements that meet users’ needs far better.

When and Why Should You Conduct a UX Survey?

Conducting a UX survey is a strategic decision to understand various aspects of user interaction with a product or service. Here are vital scenarios and reasons for implementing them:

1. To Evaluate Features and Make Enhancements

You may find UX surveys better suited to assess existing products than ones you intend to develop, and they’re surveys that can gather insights on how well your target audience receives a feature or service. Feedback from such surveys can be a great help to guide adjustments or additions to your product—like if customers think an existing feature could do better on the functionality side, you can leverage that valuable data to get your product more in line with user needs.

2. To Identify Pain Points

If you’re going to create a user-friendly experience, it’s vital that you spot pain points and design to alleviate problems. UX surveys provide direct feedback from users about what’s troubling them, and issues could be ones you’re unaware of that make the customer experience less enjoyable or less efficient.

For example, users might point out that they find your checkout process too complicated or that they’ve got trouble finding specific information on your website. Treat insights of this kind like gold; they give you specific areas to focus your improvement efforts on and make UX strategy decisions that will make the best of how users interact with your product or service. Addressing these issues helps you fix problems and show users you value and act upon their feedback.

3. To Assess Customer Satisfaction

Customer satisfaction is crucial for any business, and dissatisfaction can become a sore point in public feedback. A well-timed UX survey can gauge how well you meet customer expectations after a critical interaction, such as a purchase or customer service call, and there’s value to be had in both the “good” and the “bad.” Positive feedback helps identify what’s working well, while negative feedback highlights issues that need attention.

4. To Evaluate Customer Loyalty

For sure, long-term success hinges on customer loyalty, and NPS surveys—a type of UX survey—help gauge this. When you identify promoters, passives, and detractors, it can help you tailor customer retention and referral strategies, and a dip in loyalty scores is an alert to dig deeper into potential issues and find out how you can solve them.

5. To Journey Map

Journey mapping visually represents a user’s interactions with your product or service. It tracks the entire experience—from the first touchpoint to the final interaction—and a well-designed UX survey can provide insights at multiple stages of this journey to measure things like ease of use. Are customers finding it simple to navigate from one section of your website to another?

CSAT surveys are great to check satisfaction at critical touchpoints with—like purchase or support—and fill you in on vital details about fixes to make. Open-ended questions can bring on qualitative insights into why users make specific choices, and the answers can fill gaps in the journey map that analytics data mightn’t.

6. To Help during Major Transitions or Updates

If you’re planning a significant change, such as a rebrand or major update, a UX survey is invaluable for assessing customer sentiment and expectations before you roll out the changes. Getting survey data in means you can make adjustments that align with customer needs, and you can reduce the risk of a backlash hurting your brand.

7. To Make Continuous Improvements

It may sound like a drag, but the need for improvement never stops. Regular UX surveys create a feedback loop to help you track user sentiment and performance metrics—and they’re powerful tools that allow for ongoing adjustments based on real-world usage.

For example, if you notice a slight dip in satisfaction scores for your app usability, you can investigate and go in and make adjustments before it flares up into a big issue.
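One way to spot a dip like that early is to compare the latest score with the average of the earlier periods. The sketch below is only an illustration: the function name `satisfaction_dip`, the 0.2-point threshold, and the monthly scores are all assumptions you’d tune for your own product.

```python
from statistics import mean


def satisfaction_dip(history, threshold=0.2):
    """Return how far the latest score fell below the earlier average.

    Returns 0.0 when the drop is smaller than `threshold` (an assumed cutoff).
    """
    if len(history) < 2:
        raise ValueError("need at least two periods to compare")
    baseline = mean(history[:-1])   # average of all earlier periods
    drop = baseline - history[-1]   # positive when the latest score is lower
    return drop if drop >= threshold else 0.0


# Hypothetical average usability ratings (1-5 scale), one per month.
monthly_scores = [4.4, 4.5, 4.3, 4.4, 4.1]
dip = satisfaction_dip(monthly_scores)
if dip:
    print(f"Latest score is {dip:.1f} points below the earlier average")
```

A check like this won’t tell you why the score dropped, but it tells you when to go and look.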

6 UX Survey Best Practices From Experts


1. Make it Quick

Time is precious for pretty much everyone—so show your respondents you value theirs (long surveys can be a real turn-off). A quick and concise survey ensures that the participant stays engaged—so do focus on just the essential questions and leave out any unnecessary ones.

Steps You Can Take

Limit your survey to 5-10 essential questions. Use clear and concise language. Preview the survey with a friend or colleague so you get feedback on the length and clarity.

2. Keep It Relevant

It’s vital to keep relevance going in your survey questions—and on-point questions will get you valuable data in return. If questions stray off-topic, though, they’ll risk irritating or baffling participants. Keep questions focused to ensure you get the insights for your goals.

Steps You Can Take

Define your target audience and goals before you write any questions—and don’t have any generic questions that don’t relate to the product or service when you do get writing them. Focus on specific user experiences that align with what your objectives are. Provide not applicable (N/A) / don’t know answers for all closed questions.

3. Avoid Bias

Bias—often an unfortunate “byproduct” of being human—can distort the results and lead to misguided conclusions. The objective framing of questions helps you collect unbiased responses, and some of the common biases include:

Question order bias: Affects responses based on the sequence of questions.

Confirmation bias: Where you just ask questions that affirm what you already believe.

Primacy bias: People choose the first options that come up.

Recency bias: People are more influenced by their last experience.

Hindsight bias: Respondents say events were foreseeable.

Assumption bias: Assumes respondents know certain information.

Clustering bias: People see patterns where none exist.

Steps You Can Take

Avoid leading questions, and be sure to use neutral language that doesn’t put any slant or spin on what you’re asking. Consider asking an expert to review your questions to weed out potential bias. Test the survey on a small group of “users” before launching it to see how unbiased it is.
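For primacy bias in particular, one common mitigation (not something every survey tool does for you) is to show answer options in a different order to each respondent. Here’s a minimal sketch under that assumption; `randomized_options`, the `pinned_last` labels, and the sample options are hypothetical. It makes more sense for unordered choices than for rating scales, where the order carries meaning.

```python
import random


def randomized_options(options, pinned_last=("Other", "N/A"), seed=None):
    """Return the options in random order, keeping catch-all choices at the end."""
    rng = random.Random(seed)  # pass a seed only when you need repeatable output
    movable = [o for o in options if o not in pinned_last]
    pinned = [o for o in options if o in pinned_last]
    rng.shuffle(movable)
    return movable + pinned


# Hypothetical options for "What features did you use most?"
feature_options = ["Search", "Wishlist", "Reviews", "Live chat", "N/A"]
shown_to_respondent = randomized_options(feature_options)
print(shown_to_respondent)
```

Because every respondent sees a different order, no single option benefits from always being listed first.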

4. Mix Up Your Question Types

While multiple-choice and rating scales excel at gathering numerical data—or more quantitative research data—open-ended questions offer rich, qualitative insights. Get the right blend and you can get a more comprehensive view of customer sentiment.

Steps You Can Take

It’s a great idea to use a mixture of types of questions according to the information you need—open-ended questions for in-depth insights and multiple-choice ones for quick feedback. Think about using scale questions to gauge user satisfaction or preferences.

5. Ensure Accessibility

Accessible design is a big deal in any case, and making your survey accessible helps you capture a wide range of perspectives. If you create an accessible survey for everyone—including those with reduced abilities—you’ll get a more complete and diverse set of insights to gear your decision-making around.

Steps You Can Take

Have easy-to-read fonts and adequate color contrast, and make sure you’ve got alternative text for images. Also make sure that users can navigate the survey using keyboard controls (not everyone uses a mouse). Test the survey’s accessibility features, and—on the subject of keeping things nice and accessible—avoid complex layouts and don’t have matrix-style questions in there.


See the W3C’s Web Content Accessibility Guidelines (WCAG) for more details.
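If you want to check “adequate color contrast” with numbers, WCAG defines a contrast ratio between two colors, and its AA level asks for at least 4.5:1 for normal-size text. The sketch below follows the WCAG 2.x relative luminance formula; the function names and the sample colors are illustrative choices.

```python
def _linearize(channel):
    """Convert an 8-bit sRGB channel to its linear value (WCAG 2.x formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a color given as '#RRGGBB'."""
    hex_color = hex_color.lstrip("#")
    r, g, b = (int(hex_color[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground, background):
    """Contrast ratio between two colors, from 1 (identical) to 21 (black on white)."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


# Hypothetical survey text colors checked against a white background.
for text_color in ("#000000", "#767676", "#777777"):
    ratio = contrast_ratio(text_color, "#FFFFFF")
    verdict = "passes" if ratio >= 4.5 else "fails"
    print(f"{text_color} on white: {ratio:.2f}:1 ({verdict} AA for normal text)")
```

A quick check like this is no substitute for testing with real users and assistive technology, but it catches low-contrast text before your survey goes out.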

6. Maintain Privacy

Make participants’ privacy a priority—it’s critical to do that if they’re going to build trust in your brand. When people feel confident that their data is safe, they’ll be more ready to engage in full in your survey, and a nifty “bonus” is how a strong privacy policy doesn’t just meet legal standards and boost participation rates but enriches the quality of your insights, too.

Steps You Can Take

State your privacy policy at the start of the survey and be clear about it. Use secure platforms for conducting the survey. Assure participants that their responses will remain confidential—and honor that. Last—but not least—put sensitive or personal questions towards the end.

The Ultimate Guide to Conduct a UX Survey


Step 1: Define Your Objectives

Defining clear objectives sets the stage for a successful UX survey: it kicks off the roadmap for the best survey possible and helps you understand the key insights you’re after. To zero in on what you’re aiming to discover, consider these questions:

What is the main goal? Understand if you want to measure user satisfaction or you want to focus on something else.

Which user behaviors are relevant? Is the survey targeting frequent users, new users, or both?

What are the key metrics? Do you want to look at completion rates, time spent, or other indicators?

New feature opinions: Are you seeking input on newly rolled-out features?

Pain points: Are you trying to identify user frustrations and roadblocks?

It’s vital to get great clarity in the objectives, as it’ll guide every next step and ensure you align the results with your project goals. Well-defined goals will help you streamline the survey’s structure and help you craft relevant questions. The sharper focus has an added benefit in that it will help you analyze the data you collect later on.

Step 2: Identify Your Target Audience

You need to know whose opinions you want and how to write questions that they can relate to and answer well, so it’s important to identify your target audience. Consider the following:

Product awareness: Gauge how much your audience knows about your product, as this will shape the depth and detail of questions.

Interests: Understand what topics engage your audience, and you can use that insight to make questions interesting.

Language: A professional audience may understand industry jargon, but a general audience may well not—so choose words with care.

Region: Geography can affect preferences and opinions, so do localize questions if you need to.

Step 3: Craft Engaging Questions for the Questionnaire

There’s an art to writing good questions for surveys. What you ask is the heart of your survey, so if you write engaging, clear, and unbiased questions, they’ll bring back the insights you need from respondents. Your questions have got to captivate users’ interest and guide them through the survey, so don’t bog them down with boring, irrelevant, or ambiguous wording. Treat your survey as a design in itself, and give it great UX and a “seamless experience”!

Use different question types—like multiple-choice ones for quick feedback or open-enders to get deeper insights back. Use simple language, don’t use jargon, and ensure each question serves a clear purpose. Be mindful of potential biases—which can come up without you noticing—and keep the questions neutral.

Step 4: Select a Tool For the UX Research Survey

It’s vital to pick the right tool for your UX survey if you’re going to get the best in data collection and analysis. A Google Form offers you a quicker way to get started with UX surveys—and here’s why:

Ease of use: Google Forms is user-friendly, and even if you’re not tech-savvy, you can create a survey quickly.

Customization: It offers various themes and allows question branching based on prior answers.

Integration: Google Forms integrates with other Google services like Google Sheets for real-time data tracking.

Free: For basic features, it’s free of charge.

Data analysis: It offers basic analytics like pie charts and bar graphs for quick insights.
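To make “question branching based on prior answers” concrete, here’s a tool-agnostic sketch of the idea. It isn’t the Google Forms API or any tool’s real configuration format; the `SURVEY` structure, the question ids, and `question_path` are hypothetical, and in a survey tool you’d set this up through its editor.

```python
# Each question lists where to go next for a given answer.
# The key None acts as the default route for any other answer.
SURVEY = {
    "q_goal": {
        "text": "Did you achieve your goal on our site today?",
        "next": {"Yes": "q_rating", "No": "q_blocker"},
    },
    "q_blocker": {
        "text": "What stopped you?",
        "next": {None: "q_rating"},
    },
    "q_rating": {
        "text": "How would you rate your overall experience?",
        "next": {},  # no routes: the survey ends here
    },
}


def question_path(survey, start, answers):
    """Return the ordered question ids a respondent sees for the given answers."""
    path = []
    current = start
    while current is not None:
        path.append(current)
        routes = survey[current]["next"]
        answer = answers.get(current)
        # Follow the route for this answer, else the default, else stop.
        current = routes.get(answer, routes.get(None))
    return path


happy_path = question_path(SURVEY, "q_goal", {"q_goal": "Yes"})
unhappy_path = question_path(
    SURVEY, "q_goal", {"q_goal": "No", "q_blocker": "Checkout was confusing"}
)
print(happy_path)    # ['q_goal', 'q_rating']
print(unhappy_path)  # ['q_goal', 'q_blocker', 'q_rating']
```

Branching like this keeps the survey short for most people, since only respondents who hit a problem see the follow-up question.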

You can also use specialized tools like SurveyMonkey, which offer more advanced features. Whichever one you go with, consider what your objectives are and what your target audience needs, and then choose a tool that best serves those needs.

Step 5: Pilot the Survey

Pilot testing is a very valuable step for refining the UX survey—and that’s since it gives you a chance to uncover unforeseen issues with the survey design, questions, or technology; things you really wouldn’t notice otherwise. It’s a good idea to recruit participants in small numbers so you can test the survey.

You can ask internal team members for help or contact professionals via LinkedIn, and use this test survey to understand their experience and make necessary adjustments. This can make the difference between a good survey and a great one—and, as stated before, treat the survey like you would a design. When you pilot test well, it helps iron out the kinks and ensures a smoother product experience for the primary audience.

Step 6: Launch the Survey

Well done, it’s launch time! But launching the survey is more than just making it live. You’ll want to choose the proper channels, timing, and even incentives to get the best results. Promoting the survey ensures that it reaches your intended audience and encourages them to participate. Their time is valuable, remember, so consider the time of day, the day of the week, and even the platform that suits your audience. Plan every aspect of the launch to maximize participation so that, when you do launch, it’s “All systems go!”

Step 7: Analyze and Interpret the Results

The results are in—now comes the “fun” part! Data analysis transforms raw data into valuable insights, so be sure to use analytical tools to sort, filter, and interpret the data in the context of your objectives. Look for patterns and correlations—sure—but also keep an eye out for unexpected discoveries, as they may well pop up.

Your interpretation of the data should lead to actionable insights that guide you and your team to make substantial product or service improvements. This step transforms the effort of surveying into real value for your project.
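As a small example of sorting and filtering results against your objectives, the sketch below groups ratings by user segment and compares the averages. The field names (`segment`, `ease`), the helper `mean_by_segment`, and the responses are invented for illustration; a spreadsheet or a dedicated analysis tool can do the same job.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical responses to "How easy was it to navigate our site?" (1-5 scale).
responses = [
    {"segment": "new", "ease": 3},
    {"segment": "new", "ease": 2},
    {"segment": "frequent", "ease": 5},
    {"segment": "frequent", "ease": 4},
    {"segment": "new", "ease": 4},
]


def mean_by_segment(rows, group_key, value_key):
    """Return the average of `value_key` for each distinct `group_key` value."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[group_key]].append(row[value_key])
    return {name: mean(values) for name, values in groups.items()}


ease_by_segment = mean_by_segment(responses, "segment", "ease")
for segment, score in ease_by_segment.items():
    print(f"{segment}: {score:.1f}")
```

In this made-up sample, new users rate navigation lower than frequent users do, which is the kind of pattern that would point you toward onboarding improvements. With so few responses, treat a gap like this as a lead to investigate rather than a conclusion.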

Step 8: Share Insights and Implement Changes

Last, but not least, share your findings and implement changes to complete the process. Create comprehensive reports and engage stakeholders with the insights to get them on board and on the same page. The reports will need to be clear so the right ideas get communicated, since many stakeholders will need the data “translated” for them. Sharing fosters a common understanding and sets the stage for informed decisions that bring the right fixes and improvements to where they’re needed.

Plan and iterate on improvements based on the insights you get, and be sure to use the learnings for continuous enhancement. Keep your radar on for any insights that might emerge to help you tweak the user experience of your survey in any way possible so it does its job and brings you back info you can use well.

The 20 Best User Experience Survey Questions

These questions form a comprehensive framework for understanding various aspects of the user experience—just be sure to use only a few of these to keep response rates high.

“How did you find our website/app?”

This question helps assess the effectiveness of your marketing channels, and it shows you where people first encounter your brand. While Google Analytics reveals traffic from specific sources like AdWords or Facebook, it can’t tell you how visitors who arrive as direct traffic first heard of you. Know this and you can fine-tune your marketing strategy.

“What was your primary goal in visiting our site today? Did you achieve it?”

This is really two questions in one. Together, they focus on why users visit and whether the site meets their needs, which helps identify gaps in content or functionality.

“How easy was it to navigate our site?”

This question looks at how effective your website is, and you’re on the right track if people find it easy to navigate (well done!). If not, well, it’s a red flag and your site’s layout or functionality may need tweaks—which you’ll need to look into with due care and attention.

“What features did you use most?”

This question puts it out there as to which parts of your product or service are most valuable to customers. If the majority say they often use a specific feature, then that’s a pivotal strength to highlight in marketing.

“Were there any features that were confusing or difficult to use?”

This question zeroes in on potential weak spots in your product design or functionality—and can leave some things wide open for “attack” but for good reason. If a feature keeps getting labeled as confusing or complicated to use, yes, it needs improvement.

“How would you rate your overall experience?”

This question provides a general impression of user satisfaction; the truth is often in a number or a word or two.

“What would you change about our website or app?”

This question invites suggestions for improving your digital solution—and this “suggestion box” moment gives users a voice in the development process. If they care as much as they should and something has come up, you should hear about it.

“How likely are you to recommend our product to a friend or colleague?”

Recommendations measure customer satisfaction and loyalty, and it’s common for pop-up surveys to use this question based on a widely used metric called the Net Promoter Score (NPS). A high likelihood to recommend means that—sure—customers are happy and likely to become brand advocates.

“What other products or services would you like us to offer?”

This question taps into unmet customer needs and wants—another golden “suggestion box” moment! Responses about this can expose gaps in your current products—or services—and even go beyond feature gaps and inspire new products themselves. Trust in your users and customers, as some of them may know what they’d like to see and can voice it well—and if they didn’t care, they wouldn’t write anything, right?

“Did you encounter any technical issues?”

Technical issues—like bugs, error messages, or crashes—can affect customer satisfaction for the worse (and sometimes to the point they're steamed at you), so you’ll need to hear about it so you can change things (for the better!).

“What is your preferred payment/delivery method?”

It may seem trivial, but some customers will only buy if their preferred payment method is available (and go to a competitor if they have it). Money talks, so you’ll need to understand the popular payment options that resonate with your target audience and see about going with what they want.

“What is your preferred method of contact for support?”

This question seeks to know how customers prefer to reach out for help. Many people will want (or not want) to be emailed, phoned, texted, or contacted some other way, and some will accept only one channel. Understand this and you can help your brand optimize its customer service channels.

“How would you describe our product in one sentence?”

This question aims to capture a concise customer impression of your product, and one-sentence descriptions can reveal key strengths or weaknesses. Note that it’s “one sentence” and not “one word”—as the latter might open up confusing “cans of worms” (or references to other substances) and even praise mightn’t shed light on the all-important “why” factor.

“How does our product compare to similar ones in the market?”

This question seeks to understand your product’s competitive edge or shortcomings and has the brand standing beside its rivals for “inspection time.” Responses can tell you where you excel or lag behind other market players and give you insights on how to up your game or make those strengths even more accessible.

“Were our support resources (FAQs, live chat) helpful?”

You need to understand the effectiveness of your customer support tools—like FAQs and live chat. If most people find these resources helpful, great—they validate your support strategy. If not, it’s a cue to improve these areas, be it better Frequently Asked Questions, better live-support training, or what have you. Support is a big—no, a huge—deal, and it can determine how much your brand is worth to the customers who contact it for help, so make sure you offer assistance that benefits your customers and respects both them and the time that makes up their short lives.

“How could our product better meet your needs in the future?”

This question may be about the future, but it seizes on the present moment by sending the message to customers that your brand values them and what they think. Whether it’s about adding new features or refining existing ones, the feedback can help “big-time” with roadmap planning. If multiple customers highlight the same issue (like with pricing), that’s a vital sign that needs attention—before they “jump ship” to a competitor who’s cheaper.

“How did you find the speed of the site?”

This question evaluates how your site speed impacts user satisfaction. Slow loading frustrates users and may even lead them to abandon the site. If multiple people report this issue, you’ll need to optimize, and remember that many users access digital products on mobile devices, which have their own UX needs.

“What language options would you prefer for our website/app?”

This question identifies the language preferences of your user base—not everyone will be adept or comfortable with English, for example. If a sizeable portion prefers another language, it makes sense to offer that option—be it Spanish, Russian, Chinese, etc. (though we might leave out Esperanto). Another “perk” of offering text in other tongues is how it won’t just broaden your reach but make your platform more inclusive as well.

“Would you like a follow-up from our team regarding your feedback?”

This question is another one that shows you respect your respondents’ time—and they’ll appreciate the courtesy. Get a “yes” from them and it pretty much means the respondent is engaged and open to dialogue, which you can take as a sign of higher loyalty or interest. But a “no” means they provided feedback, all right, but aren’t looking for a discussion—so just leave it at that and leave them alone.

“Would you be interested in future updates or newsletters?”

This one gets an easy binary response on how interested customers are in staying connected with your brand, and a “yes” usually means a “happy camper.” It would be wonderful if every respondent were a satisfied customer who’s likely to engage with future offerings, but (reality check) there are going to be “no’s” too. A “no” could suggest that they’re not fully satisfied, or not interested in long-term engagement, so just take it at face value and respect their wishes.

What are Some Great Free UX Survey Templates?

Find out what clients think about your business, and use this form as a case study to gather thoughts on customer service and more. Make changes to the template so you focus on specific aspects of customer interaction.

Experts have made this ready-to-use template to improve your software’s Net Promoter Score (NPS). Use it to gather critical insights to elevate your product.

It’s easy to gauge customer loyalty with this template, where customers rate their likelihood of recommending you from 0 to 10—and you can adapt the template to explore additional areas.

Assess the performance of your customer service team—and adapt the survey to delve into aspects which you’re particularly interested in.

Send this brief survey out to understand customer perceptions—and it doesn’t just encourage customers to elaborate on their answers, but you can make adjustments to fit your needs too.

Use this template to collect comments on your products—and it’s nice and handy as it aims to identify issues and suggest resolutions.

Collect rapid feedback on your products, and use this form to get concise and actionable comments from customers.

Capture detailed information on how your customers feel about your products and services—handy for not just pinpointing specific areas for improvement but getting a good view of your brand’s “status” with them, too.

Final Thoughts—And The Take Away

UX surveys are pretty powerful tools when you’ve got the right type working for you, the right wording of questions in, and the right mindset on how to engage the people who’ll answer them. It takes everything from defining objectives to crafting engaging questions, ensuring accessibility, analyzing results, and implementing changes, but the work is worth it to zero in on the best survey possible. From the get-go, it’s vital to make sure you’ve got clear objectives keeping the survey on course—because when you understand what you want to achieve with the survey, it sets the foundation for success and guides every subsequent step for you.

Another vital thing to bear in mind is to ask relevant questions that are also engaging: ones that also show you value the time of the people who are answering them; clear, interesting, and unbiased questions that cover various facets of the user experience—a winning formula that helps in capturing genuine feedback and insights.

Last—but not least—your survey is a design in itself that needs to satisfy its users and respect them as individuals with opinions. So, make sure you ramp up the UX of it and keep the “magic” alive so they can look on your brand as one that speaks to—and advocates for—them, and is one that’s interested in retaining them as loyal customers long into a fruitful future.